Research Article, J Comput Eng Inf Technol Vol: 6 Issue: 4

A Framework for Performance Study of Micro Computers in a Virtual Environment (A Case Study of AMD and Intel Processors)

Souley Boukari*, Abubakar Tafawa Balewa and Zamakwa Ibrahim Dibal

Department of Mathematical Sciences Abubakar Tafawa Balewa University, Bauchi, Nigeria

*Corresponding Author : Souley Boukari

Lecturer, Department of Mathematical Sciences Abubakar Tafawa Balewa University, Bauchi, Nigeria

E-mail: bsouley2001@yahoo.com

Received: April 21, 2017 Accepted: July 18, 2017 Published: July 23, 2017

Citation: Boukari S, Balewa AT, Dibal ZI (2017) A Framework for Performance Study of Micro Computers in a Virtual Environment: A Case Study of AMD and Intel Processors. J Comput Eng Inf Technol 6:4. doi: 10.4172/2324-9307.1000178

Abstract

Virtualization is used in the cloud as the underlying technology for managing and improving the utilization of data and computing centre resources through server consolidation. Performance is an important quality measure for any organization that wants to accomplish its operations efficiently. IT professionals have to identify their performance requirements: for example, some hardware platforms support virtualization, whereas others operate standalone. Some applications and operating systems may also be incompatible with some hardware. While some systems need substantial CPU processing power, others have significant I/O requirements. Much research has been done on the performance of microcomputers in a virtual environment, but most of it used the traditional method of powering the virtual system and did not consider used and available memory. This work considered not only the load on the CPU and memory but also the used and available memory, and developed an automated method of running the virtual system from the hypervisor to the hardware monitor level for speed and accuracy. An analytical model was developed and simulated using Bootstrap, HTML and PHP, with MySQL as the database. Utilization measures for both the CPU and memory of the two motherboards, AMD and Intel, were evaluated. The results revealed that Intel gave the better (lower) CPU utilization: 50% as against 63% for AMD in the physical environment, and 51% as against AMD’s 52% in the virtual environment for the diverse workloads. The memory of AMD, however, gave better results: 40.9% as against Intel’s 66.4% in the physical environment, and 66.3% as against Intel’s 68.7% in the virtual environment. This means that the type of memory affects the performance of a server. Virtualization can be recommended to schools and organizations that face constraints in buying computer hardware.

Keywords: Advanced Micro Devices; Intel; Virtualization; Performance; Utilization

Introduction

Virtualization is not a new concept and has been in use for decades in different ways. However, it is more popular now than ever because it is now an option for a larger group of users and system administrators than ever before. There are several general reasons for the increasing popularity of virtualization, as stated in [1].

The power and performance of commodity hardware continue to increase. Processors are faster than ever, support more memory than ever, and the latest multi-core processors literally enable single systems to perform multiple tasks simultaneously. These factors combine to increase the chance that your hardware is underutilized. Virtualization provides an excellent way of getting the most out of existing hardware while reducing many other IT costs.

The integration of direct support for hardware-level virtualization in the latest generations of Intel and AMD processors, motherboards, and related firmware has made virtualization on commodity hardware more powerful than ever before.

A wide variety of virtualization products for both desktop and server systems running on commodity hardware have emerged, are still emerging, and have become extremely popular. Many of these are open source software and are attractive from both a capability and cost perspective.

More accessible, powerful, and flexible than ever before, virtualization is continuing to prove its worth in business and academic environments all over the world. Virtualization provides a way of relaxing the foregoing constraints and increasing flexibility. When a system (or subsystem), for example a processor, memory or input/output device, is virtualized, its interface and all resources visible through the interface are mapped onto the interface and resources of a real system actually implementing it [2]. Virtualization enables users to make the most effective use of existing computer systems, optimizing the data centre by reducing the number of physical machines that need to be deployed, managed, and maintained. Although reducing the number of machines to minimize capital expenses and operating costs is important, the reliability, performance, and availability of the computing services that your enterprise requires are the primary concerns of any data centre [1].

Virtualization can be viewed as a technique for running more than one operating system instance on a single computer simultaneously, making one computer appear as two, three or several more computers.

This paper is geared towards discovering and analysing the performance of microcomputers in a virtual environment. Below are some of the problems to which this research work attempts to proffer solutions:

The choice of hardware for the virtual infrastructure has always been a core issue, as performance degradation of virtual computing has been observed in recent times.

The inability of users to quantify and predict the performance of the system in virtual machines in terms of both processor and memory performance.

The inability to tell how the virtual machine affects the virtual memory of the system when the virtual system is both processor busy and memory busy.

Some physical servers do not fully use their processing capabilities, often running well below the percentage of CPU usage deemed efficient. The addition of more physical servers also means an increase in costs. Physical servers can consume massive amounts of energy and create tremendous heat, leading to skyrocketing energy bills. Disaster recovery is also a major concern. With files stored on physical hardware, it can quickly become expensive to back up data and information in multiple locations. One alternative deployment method is one of today’s most powerful industry trends: virtualized environments.

With the introduction of new operating systems in the market, some hardware is not compatible with some of the operating systems. Single users therefore need to embrace virtualization, which enables them to run multiple operating systems on a single machine and vice versa. This need also necessitates this work.

Related work

A significant number of studies have been carried out in the field of virtualization. Different aspects have been addressed, and one of the core interests has been the performance trade-off between physical and virtual servers. Some studies found a significant level of fluctuation between the performance of virtual servers and physical servers, with the physical servers 50% to 100% more efficient in performance than the virtual servers [3].

It was also found that virtualization performance depends on the underlying virtualization technique or architecture, as different virtualization platforms exhibited different levels of efficiency and performance. It was also argued that the performance of the virtual servers depends largely on the total number of virtual operating systems in use on one single virtualized platform [3-5].

Again it was discovered that, if planned and implemented properly, the virtualization approach and thus the virtual servers could potentially help the computing infrastructures to facilitate innovative computing like cloud computing without compromising the performance and thus the virtualization techniques were becoming more and more popular in data centres and in the field of cloud computing [6-9].

Similar work was also carried out in which load against time for both memory utilization and CPU utilization, and their average percentages, were considered [10,11]. SunGard and Eric [12-14] used the traditional way of powering the virtual system from the hypervisor to the Open Hardware Monitor for their test cases, which can be difficult to use, takes a lot of time to set up and can lead to errors. Their work also did not look at how different models of memory and processor affect system performance under virtualization. Another limitation of their work is the use of a single processor type. The same memory size (32 GB) was also used throughout the experiment [12,14], so it cannot tell whether and how memory size affects virtualization. The framework consists of four components, namely input, workload analysis or monitor, service and performance analysis [10,14]. They also did not consider used and available memory, so users in countries with limited internet facilities can have issues using this model, and users can have problems with processor choice; this can lead to system crashes. SunGard and Eric [10,12] used the cloud for their experiment but did not consider ordinary users, who currently face many challenges with hardware compatibility.

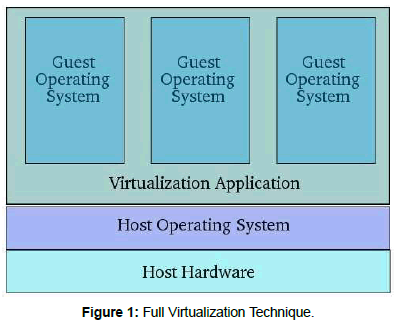

In full virtualization, the virtual machine simulates enough hardware to allow an unmodified guest operating system to run in isolation. This approach was pioneered in 1966 with the IBM mainframes. Full virtualization fully abstracts the guest operating system from the underlying hardware (completely decoupled). The guest operating system is not aware that it is being virtualized and requires no modification. Full virtualization offers the best isolation and security for virtual machines, and allows simple procedures for migration and portability, as the same guest operating system instance can run virtualized or on native hardware. Full virtualization is illustrated in Figure 1.

In the case of server consolidation, many small physical servers are replaced by one larger physical server to increase the utilization of expensive hardware resources. Each operating system running on a physical server is converted to a distinct operating system running inside a virtual machine. In this way a single large physical server can host many guest virtual machines [14,15].

Methodology

System analysis: Intel and AMD

The current Intel and AMD hardware virtualization extensions are not directly compatible with each other, but serve largely the same functions. Either will allow a virtual machine hypervisor to run an unmodified guest operating system without incurring significant emulation performance penalties.

AMD processors have a 10-step execution process, which does not allow clock speeds as high as Intel’s. Intel, on the other hand, has a 20-step execution process, which allows much higher clock speeds but fewer operations per clock.

Problem associated with existing systems

Although computer data is more valuable than the computer system itself, computer users continue to lose valuable data, and even those who have thought of using virtualization to safeguard data are afraid of losing it.

Current operating systems are not compatible with old hardware, especially output devices such as printers, so costs are not saved.

Many hardware options are available in the market for virtualization. However, the ratings have been inconsistent, so users still find it difficult to determine which microprocessor and memory size are best, which is key in virtualization. Some users choose systems with high-end processors without minding the level of memory; when they encounter graphical activities, they begin to have challenges with the display. Furthermore, regarding existing systems in School Management Software, it was discovered that, since the schools are not using virtualization, most of them are not making full use of their hardware and software facilities. They view the systems only in terms of physical usage and have little knowledge of virtualization, hence they underutilize the systems. As a result, they spend a lot of money on hardware and on managing their different applications, and they consume a lot of power, which makes it more costly to keep their systems running. Moreover, there are problems associated with current operating systems, including compatibility, flexibility, isolation, security and hardware utilization.

Proposed system and architecture

The architecture is designed to take advantage of the trend towards multi-core CPUs. To ensure the highest performance, a physical processor core was dedicated to the virtual CPU of the system that contains the device drivers, and 512 MB of the physical memory was dedicated to the virtual memory. This ensures optimal scheduling between the service VM with the full virtualization device and the device driver. Figure 1 shows the architecture of the proposed system.

System model: The system model is composed of three models: the functional model, the object model and the dynamic model. The functional model consists of the physical environment and the virtual environment, while the object model is made up of the graphical activities and the database application (school management system). The dynamic model is the processor and memory.

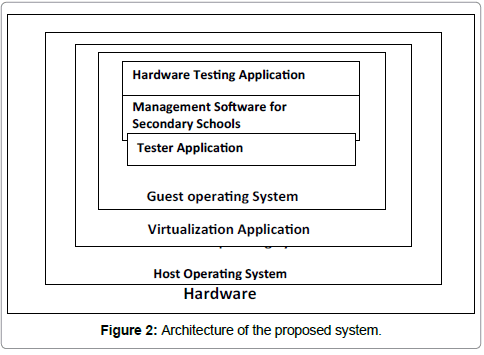

Proposed analytical model: The analytical model is depicted in Figure 2 below.

This model is composed of the following major components:

Host operating system: the operating system running on the physical system. In our case, Windows 7 was used.

Virtual machine monitor: the hypervisor or virtual machine monitor that is used. In our case, VMware was used.

Guest OS: the virtual machines run on the hypervisor. Windows XP is considered in this work.

Application software and graphic movie: the software used as load on the system to make it processor busy and to test the memory performance.

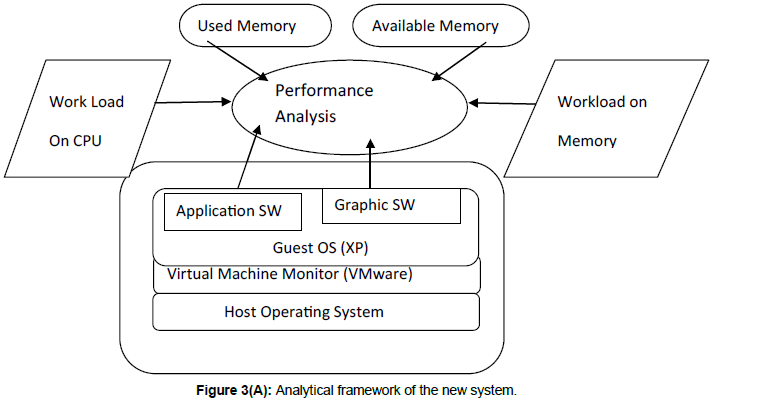

Performance analysis (Open Hardware Monitor): the software that analyses the performance of the hardware. We used the Open Hardware Monitor in the new system we built to analyse the load on the CPU, the load on memory, and the used and available memory.
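The four monitored quantities are related. As a minimal sketch, and assuming that memory load is defined as used memory over the sum of used and available memory (an assumption made here for illustration; the paper does not state the formula), the relationship could be expressed as follows. The function name `memory_load_percent` and the sample values are hypothetical.

```python
def memory_load_percent(used_gb: float, available_gb: float) -> float:
    """Return memory load as a percentage.

    Assumption: load = used / (used + available). This definition is
    illustrative and is not taken from the paper.
    """
    total_gb = used_gb + available_gb
    if total_gb <= 0:
        raise ValueError("used + available memory must be positive")
    return 100.0 * used_gb / total_gb


# Hypothetical reading: 3.0 GB used, 1.0 GB available.
example_load = memory_load_percent(3.0, 1.0)
print(f"memory load: {example_load:.1f}%")
```

Reporting used and available memory alongside the percentage keeps the absolute headroom visible, which a percentage alone hides when total memory differs between systems.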

The analytical framework is given in Figure 3 (A) below.

System design

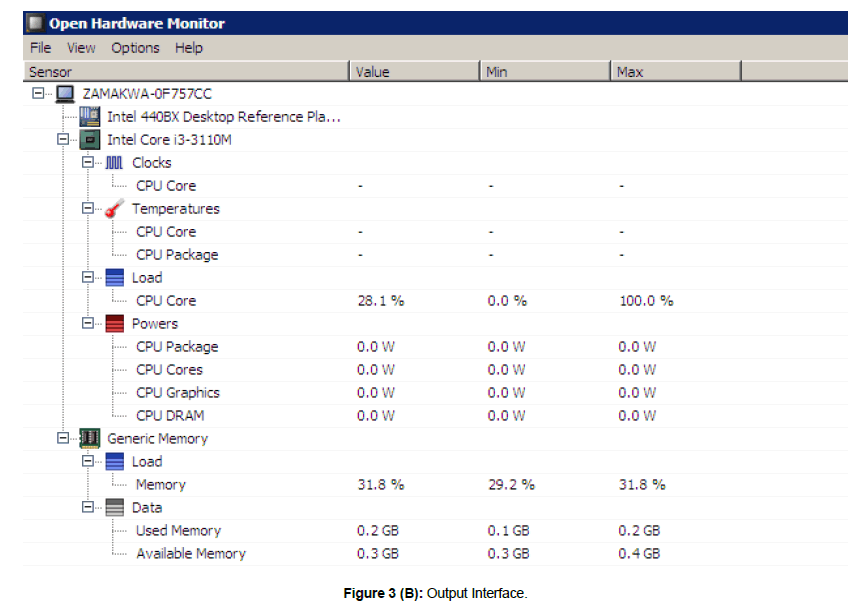

Design of interfaces: Figure 3(B) below shows the output interface of the new system showing the load on memory, load on processor, used and available memories.

Input interface: The input data of the system include 2G RAM, 4G RAM, a graphical movie and dual-core processors, which host the thesis and are used to test the performance of the AMD and Intel motherboards in the virtual system.

Program design: The algorithms for the system are given in Algorithms 1 and 2.

Algorithm 1: VM performance Optimization

Input: load on system

Output: max and min load graph; max and min used-memory graph; max and min available-memory graph

foreach host in hostList do

if isHostMaxLoaded(host) then

vmsToDisplay.add(getVmsToDisplayFromMaxLoadedHost(host))

end if

end foreach

maxMap.add(getNewVmPlacement(vmsToDisplay))

vmsToDisplay.clear()

foreach host in hostList do

if isHostMinLoaded(host) then

vmsToDisplay.add(host.getVmList())

maxminMap.add(getNewVmPlacement(vmsToDisplay))

end if

end foreach

display graph

foreach host in hostList do

if isHostMaxUsed(host) then

vmsToDisplay.add(getVmsToDisplayFromMaxUsedHost(host))

end if

end foreach

maxMap.add(getNewVmPlacement(vmsToDisplay))

vmsToDisplay.clear()

foreach host in hostList do

if isHostMinUsed(host) then

vmsToDisplay.add(host.getVmList())

maxminMap.add(getNewVmPlacement(vmsToDisplay))

end if

end foreach

display graph

foreach host in hostList do

if isHostMaxAvailable(host) then

vmsToDisplay.add(getVmsToDisplayFromMaxAvailableHost(host))

end if

end foreach

maxMap.add(getNewVmPlacement(vmsToDisplay))

vmsToDisplay.clear()

foreach host in hostList do

if isHostMinAvailable(host) then

vmsToDisplay.add(host.getVmList())

maxminMap.add(getNewVmPlacement(vmsToDisplay))

end if

end foreach

return display graph
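The output of Algorithm 1 (maximum and minimum values for load, used memory and available memory) can be illustrated with a short Python sketch. This is not the authors' implementation, which was built with PHP and MySQL; the sample format, the `METRICS` names and the function `max_min_per_metric` are hypothetical, and the readings are made-up values.

```python
from typing import Dict, List, Tuple

# Hypothetical sample format: one dict per reading from the hardware monitor.
Sample = Dict[str, float]

# Illustrative metric names, not identifiers from the paper.
METRICS = ("load", "used", "available")


def max_min_per_metric(samples: List[Sample]) -> Dict[str, Tuple[float, float]]:
    """Return {metric: (maximum, minimum)} over all samples."""
    if not samples:
        raise ValueError("at least one sample is required")
    result = {}
    for metric in METRICS:
        values = [sample[metric] for sample in samples]
        result[metric] = (max(values), min(values))
    return result


# Made-up readings: load in percent, used and available memory in GB.
readings = [
    {"load": 40.0, "used": 1.5, "available": 2.5},
    {"load": 75.0, "used": 3.0, "available": 1.0},
    {"load": 55.0, "used": 2.0, "available": 2.0},
]
summary = max_min_per_metric(readings)
for metric, (highest, lowest) in summary.items():
    print(f"{metric}: max={highest}, min={lowest}")
```

The resulting pairs are the values a graphing layer would plot for each of the three outputs.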

Algorithm 2: Back up Test Result

Define e as the ending snapshot of the test

Define t_i as the begin snapshot for a 600 s interval and C_i as the corresponding server load value

Define t_{i+1} as the end snapshot for the 600 s interval and C_{i+1} as the corresponding server load value

For s >= t_i and t_i < e

If (C_i does not differ from C_{i+1} by 10%)

Record the corresponding OHM Elapsed Time, S_i

t_i = t_{i+1}

done.
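Algorithm 2 can be sketched in Python as a scan over consecutive snapshots. The sketch assumes that "does not differ by 10%" means a relative difference of at most 10% with respect to C_i, which is one possible reading; the function name `steady_intervals`, the `tolerance` parameter and the snapshot values are hypothetical.

```python
from typing import List, Tuple

# (elapsed time in seconds, server load) pairs, ordered by time.
Snapshot = Tuple[float, float]


def steady_intervals(snapshots: List[Snapshot], tolerance: float = 0.10) -> List[float]:
    """Return the begin times t_i of intervals whose load is steady.

    Assumption: an interval is steady when C_{i+1} differs from C_i by at
    most `tolerance`, measured relative to C_i.
    """
    recorded = []
    for (t_i, c_i), (_, c_next) in zip(snapshots, snapshots[1:]):
        if c_i == 0:
            steady = c_next == 0
        else:
            steady = abs(c_next - c_i) / abs(c_i) <= tolerance
        if steady:
            recorded.append(t_i)
    return recorded


# Made-up snapshots taken every 600 s.
snaps = [(0, 50.0), (600, 52.0), (1200, 70.0), (1800, 71.0)]
steady_times = steady_intervals(snaps)
print(steady_times)
```

Pairing each snapshot with its successor through `zip` plays the role of the step t_i = t_{i+1} in the algorithm.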

Results and Discussion

Results

The results of data collected via sample questionnaires distributed to two secondary schools were analysed. This necessitated the building of the school management software that was used in testing the virtualization processes alongside the graphic movie. Both were used to make the processor busy as well as to add load on the memory. The technologies and programming tools used include VMware, Open Hardware Monitor, WAMP, MySQL, PHP, HTML and Bootstrap. For the purpose of testing, four different test cases were carried out. The two classes of systems with the different input data were tested. The input data for the various test cases are listed below:

Test case 1

CPU utilization for AMD Mother Board with 4G RAM

Memory utilization for AMD mother board with 4G RAM

Used memory in Gigabyte for AMD mother board with 4G RAM

Available memory in Gigabyte for AMD board with 4GB RAM

Test case 2

CPU utilization for AMD board with 2G RAM

Memory utilization for AMD board with 2G RAM

Used memory in Gigabyte for AMD board with 2GB RAM

Available memory for AMD board with 2G RAM

Test case 3

CPU utilization for Intel mother board with 4G RAM

Memory utilization for Intel mother board with 4G RAM

Used memory in Gigabyte for Intel mother board with 4G RAM

Available memory in Gigabyte for Intel board with 4G RAM

Test case 4

CPU utilization for Intel board with 2G RAM

Memory utilization for Intel board with 2G RAM

Used memory in Gigabyte for Intel board with 2GB RAM

Available memory for Intel board with 2G RAM

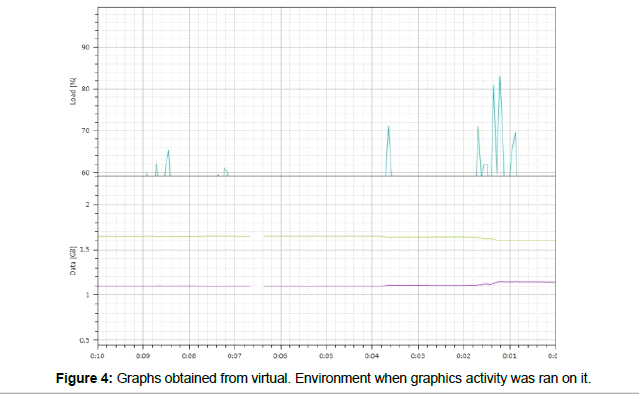

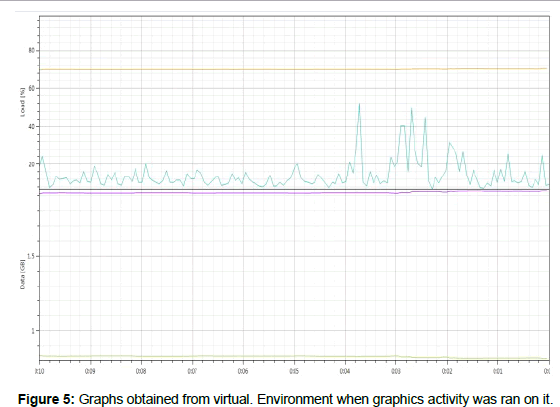

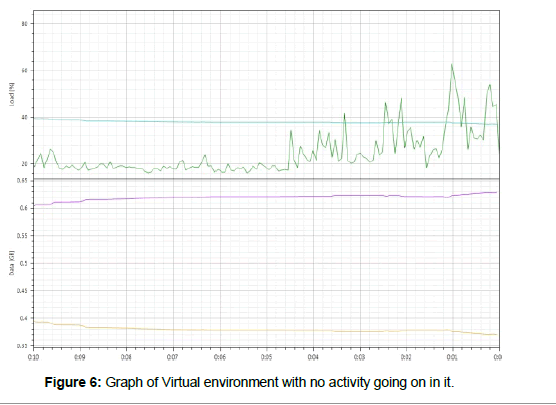

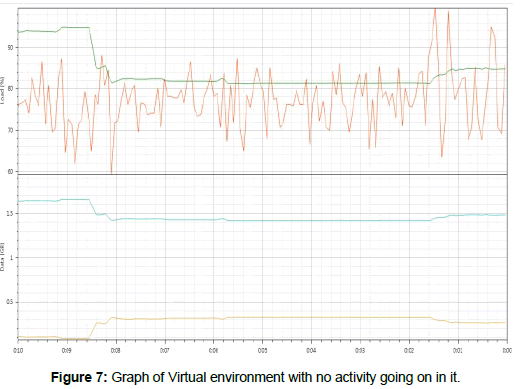

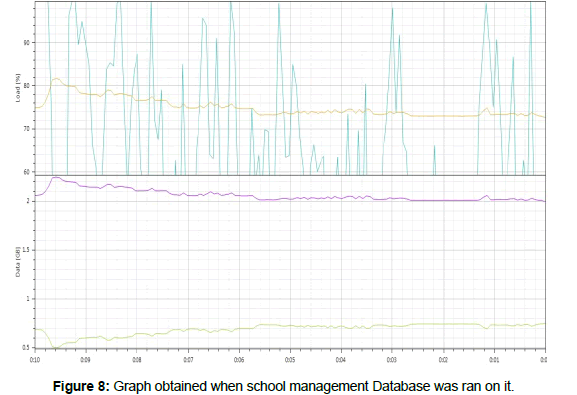

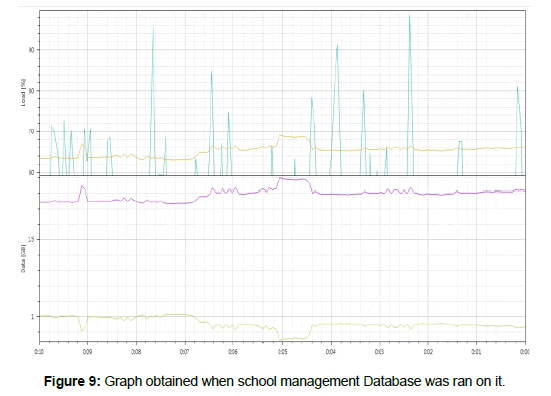

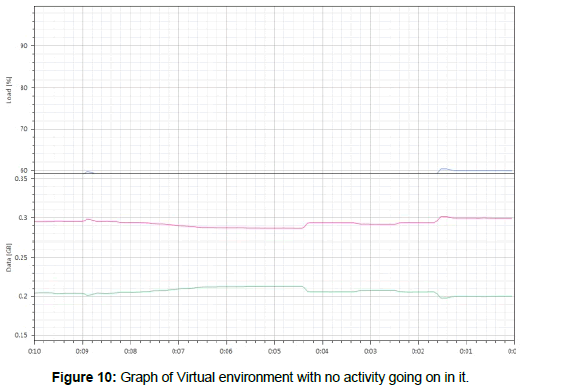

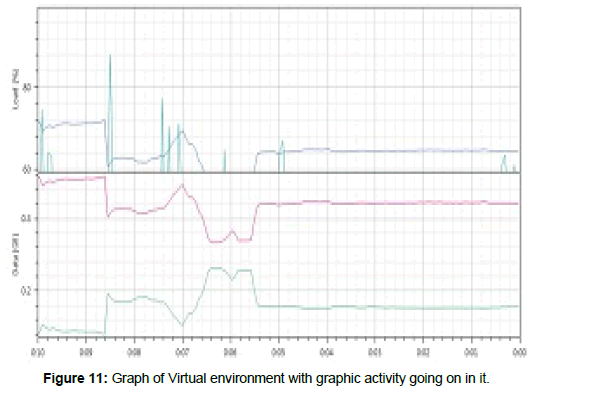

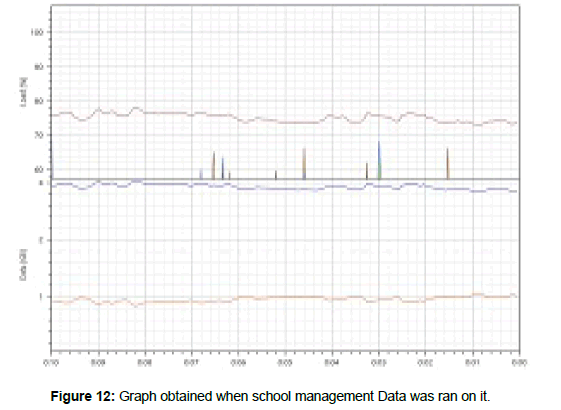

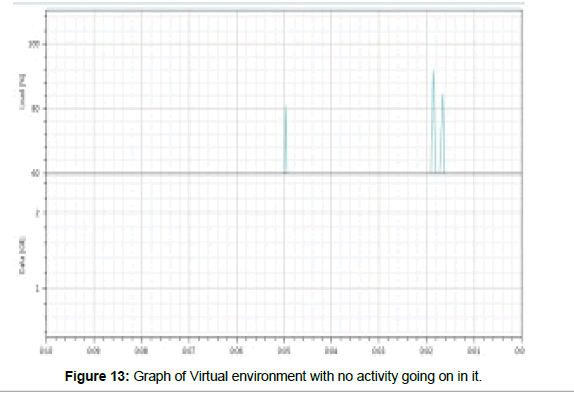

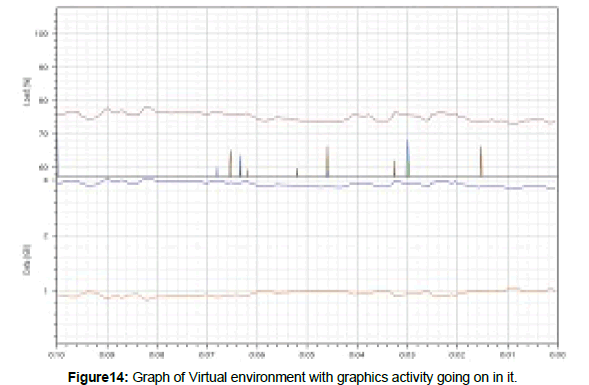

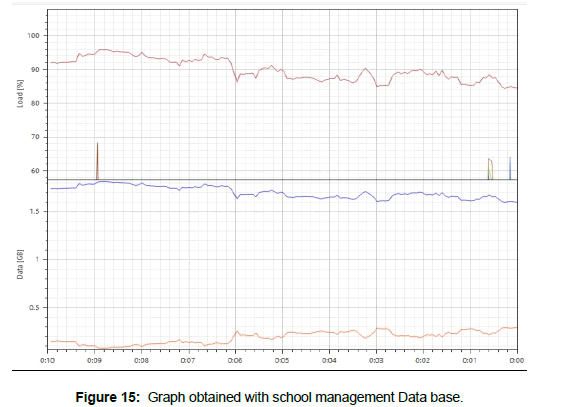

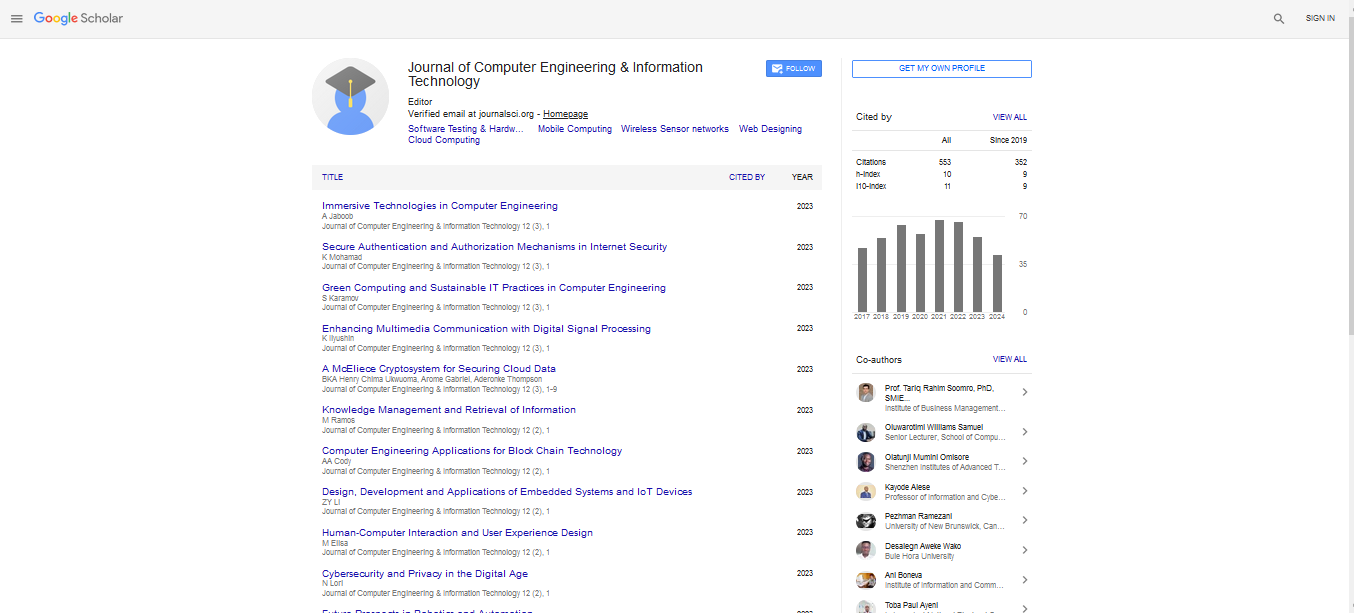

The simulation results obtained for the four test cases are presented in Figures 4-15 and summarized in Tables 1 and 2. Eric, Tesi and Espen suggested that the best result (metric) is the average performance of the CPU load as well as the average performance of the memory load. Tables 1 and 2 below show the summary of the average performances for both the AMD and Intel boards:

| Variables | AMD 4G RAM (%) | Intel 4G RAM (%) | AMD 2G RAM (%) | Intel 2G RAM (%) |
|---|---|---|---|---|
| Processor Load | 44.6 | 51.2 | 71.1 | 51.2 |
| Memory Load | 81 | 59 | 91.7 | 68.7 |
| Processor Load | 63.1 | 57.1 | 36.5 | 59 |
| Memory Load | 75.9 | 56.7 | 71.2 | 84 |
| Processor Load | 44.6 | 52.3 | 73.9 | 52.3 |
| Memory Load | 81 | 65.8 | 69.2 | 58.9 |
| Processor Load | 63.1 | 50 | 72.3 | 53.1 |
| Memory Load | 40.9 | 66.4 | 88.4 | 62.6 |
| Processor Load | 52.3 | 51.2 | 54.6 | 50.4 |
| Memory Load | 66.3 | 68.7 | 87.7 | 89.7 |

Table 1: The average performance of processor load and memory load of Intel and AMD both in percentage.

| Variables | AMD 4G RAM (GB) | Intel 4G RAM (GB) | AMD 2G RAM (GB) | Intel 2G RAM (GB) |
|---|---|---|---|---|
| Used Memory | 2.3 | 2.3 | 1.7 | 1.3 |
| Available Memory | 0.6 | 1.6 | 0.2 | 0.6 |
| Used Memory | 2.1 | 2.3 | 1.9 | 1.6 |
| Available Memory | 1.7 | 1.7 | 0.5 | 0.3 |
| Used Memory | 2.3 | 0.4 | 1.9 | 0.3 |
| Available Memory | 0.6 | 0.2 | 0.9 | 0.3 |
| Used Memory | 1.2 | 3.4 | 1.6 | 0.3 |
| Available Memory | 1.5 | 0.2 | 0.2 | 0.2 |
| Used Memory | 1.8 | 1.3 | 1.6 | 1.7 |
| Available Memory | 0.9 | 0.6 | 0.7 | 0.2 |

Table 2: The averages of used and available memory both in Gigabyte for the Intel and AMD boards.
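The averaging metric behind Tables 1 and 2 can be sketched as follows. This is a minimal illustration, assuming each test run yields a series of periodic readings that are averaged per quantity; the key names, the function `average_performance` and the sample values are hypothetical and are not the paper's data.

```python
from statistics import mean
from typing import Dict, List


def average_performance(samples: List[Dict[str, float]]) -> Dict[str, float]:
    """Average each monitored quantity over all samples, rounded to one decimal."""
    if not samples:
        raise ValueError("at least one sample is required")
    keys = samples[0].keys()
    return {key: round(mean(sample[key] for sample in samples), 1) for key in keys}


# Made-up readings: loads in percent, memory in GB.
run = [
    {"cpu_load": 40.0, "memory_load": 60.0, "used_gb": 2.0, "available_gb": 2.0},
    {"cpu_load": 50.0, "memory_load": 70.0, "used_gb": 2.5, "available_gb": 1.5},
    {"cpu_load": 60.0, "memory_load": 80.0, "used_gb": 3.0, "available_gb": 1.0},
]
averages = average_performance(run)
print(averages)
```

Averaging over the whole run smooths out short spikes, which is why a single mean per quantity is reported for each board and RAM size.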

Discussion of Results

Processor load for 4G RAM

Intel had higher processor utilization (load) than AMD when no activity was going on in the virtual environment (52% : 44%).

AMD had higher processor utilization (load) than Intel when a graphical activity was run in the virtual system: AMD had 63% while Intel had 57%.

AMD showed higher processor utilization (load) than Intel when the database was loaded on the systems, that is, 52% as against 51%.

Memory load for 4G RAM

AMD showed higher memory utilization of about 81% as against that of Intel, which was 65%, in the virtual system.

With graphical activity going on in the systems, Intel showed higher memory utilization of 66% as against that of AMD, which was 40%.

When the database was loaded, Intel showed higher memory utilization of 68% as against that of AMD, which was 66%.

The AMD RAM was seen to perform better than the Intel RAM when higher loads were running on the system. In terms of processor load, however, Intel showed better efficiency than AMD when a higher load was active. This means that the RAM of the AMD board, which was SD, showed better results under virtualization than the Intel RAM, which was DDR3, while the Intel processor showed better results than the AMD processor because of its core ability. Another finding is that RAM affects the performance of a system under virtualization. The higher the RAM the better the result, so the choice of a good type of RAM is important.

Processor load for 2G RAM

AMD had higher processor utilization (load) of about 72% than Intel, whose load was 52%, when no activity was going on in the virtual environment.

AMD had a higher processor load (utilization) of about 72% as against Intel, which had 53%, when both were in the virtual environment.

When the database was loaded on them, AMD had a higher load of about 54% while that of Intel was 50%.

Memory load for 2G RAM

AMD had a higher memory load (utilization) of about 69% in the virtual environment, while Intel had a lower load of about 59% in the same virtual environment.

When a graphical movie was set in motion on them, AMD had a higher memory load (utilization) of about 88% in the virtual environment, while Intel showed lower memory utilization (load) of about 84% for the physical and 62% for the virtual environment.

When the database was loaded on them, AMD showed a lower memory load of 87% while the Intel system showed a higher load of about 89%.

The higher the RAM, the better the performance of the AMD system in terms of RAM only.

The lower the RAM, the better the performance of Intel than AMD in terms of both RAM and processor utilization (load).

Results of used memory in gigabyte for 4G RAM

In the virtual environment, AMD used more memory than Intel (2.3 GB : 0.4 GB).

When a graphic activity was set in motion on them, we noticed that Intel used more memory than AMD in the virtual environment (3.4 GB : 1.2 GB).

The database was also tested on them and we found that AMD used more memory than the Intel system (1.8 GB : 1.3 GB).

Available memory in gigabyte for 4G RAM

In the virtual environment, AMD had more available memory of 0.6 GB while Intel had only 0.2 GB.

When graphical activity was run on them in the virtual environment, we noticed that AMD had more available memory of about 1.5 GB while Intel had only 0.2 GB available.

When the database was loaded on both systems, we noticed that AMD had more available memory of about 0.9 GB than Intel, which had only 0.6 GB.

Used memory in gigabyte for 2G RAM

AMD used more memory than Intel in the virtual environment when tested (1.9 GB: 0.3 GB).

When a graphical activity was set in motion on them, AMD used more memory than Intel in the virtual system (1.6 GB : 0.3 GB).

The database was loaded on them and it was discovered that Intel used more memory, 1.7 GB, than AMD, which used 1.6 GB.

Available memory in gigabyte for 2G RAM

When no activity was going on in the virtual systems, AMD had more available memory of about 0.9 GB while Intel had 0.3 GB.

With graphical movie going on in them in the virtual environment, both had the same available memory of 0.2 GB each.

The database was also tested on them and the result showed that AMD had more available memory of 0.7 GB against Intel, which had 0.2 GB available.

Under virtualization (virtual environment) we again noticed that AMD had higher available memory than Intel, as shown in Table 2 above. This is because it uses less memory in the virtual environment and thus has more left.

The resources (CPU and memory) that the VM sees as available are not necessarily physically available. It was also noticed that the guest OS does not get exclusive access to its assigned resources, a fact of which it is unaware. In some cases the load on the CPU and memory did not increase or decrease as load was added or removed, as would be expected. Hence our suspicion of errors due to wear and tear could be true. We also noticed that the AMD memory gives a better output than that of Intel. This is because the Intel system was using DDR3 while the AMD system was using SD, which means that some models of RAM work better than others.

Conclusion

Based on the benchmark results, some general conclusions can be drawn about how to optimize system performance in a VM. First, one should make sure that the VM has enough virtual RAM for any concurrently running guest OS processes. Secondly, make sure that the amount of virtual RAM required by all concurrently running VMs is less than the physical RAM of the machine, and leave some for the hypervisor or host OS to use as well.

Lastly, if the application makes heavy use of the CPU, use a system with a higher-capacity processor than the application needs. Virtualization has several advantages over non-virtualized systems because of its flexibility, availability, scalability, hardware utilization and security. Based on the findings, we recommend that those who want to venture into virtualization adopt the testing methodology used in this research work, as it is highly efficient, bearing in mind that their system must have at least a dual-core processor. Applications that consume more processor time should use an Intel processor, while those that consume more memory can use SD RAM and assign enough memory to the virtual system (environment), but not more than that of the host system.
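The memory-sizing recommendations above can be expressed as a simple check. This is a sketch only: the function `ram_plan_ok`, the `host_reserve_mb` parameter and the figures used are hypothetical, and the size of the reserve for the host OS or hypervisor is an assumption, since the paper gives no value for it.

```python
from typing import List


def ram_plan_ok(physical_ram_mb: int, vm_ram_mb: List[int], host_reserve_mb: int) -> bool:
    """Check that all concurrently running VMs fit in physical RAM.

    The total virtual RAM plus a reserve for the host OS or hypervisor must
    not exceed the physical RAM. The reserve size is an assumed input.
    """
    if physical_ram_mb <= 0 or host_reserve_mb < 0 or any(ram <= 0 for ram in vm_ram_mb):
        raise ValueError("RAM sizes must be positive and the reserve non-negative")
    return sum(vm_ram_mb) + host_reserve_mb <= physical_ram_mb


# Hypothetical plans: a 4 GB host with two VMs, and a 2 GB host with two VMs.
plan_4g = ram_plan_ok(4096, [512, 1024], host_reserve_mb=1024)
plan_2g = ram_plan_ok(2048, [1024, 1024], host_reserve_mb=512)
print(plan_4g, plan_2g)
```

Running such a check before powering on the guests avoids overcommitting memory, which is the situation the second recommendation warns against.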

References

- William VH (2008) Professional xen virtualization. Update, source code, and wrox technical. John Wiley & Sons, Hoboken, New Jersey, United States.

- Popek GJ, Goldberg RP (1974) Formal requirements for virtualizable third generation architectures. Communications of the ACM 17: 412-421.

- Jung JS, Bae YM, Soh W (2011) Web performance analysis of open source server virtualization techniques. IJMUE 6: 45-51.

- Ali I, Meghanathan N (2011) Virtual machines and networks – Installation, performance, study, advantages and virtualization options. IJNSA 3: 1- 15.

- Prakash HR, Anala MR, Shobha G (2011) Performance analysis of transport protocol during live migration of virtual machines. Int. j. computer sci. eng. Commun 2: 715-722.

- Praveen G, Vijayrajan P (2011) Analysis of performance in the virtual machines environment IJAST 32: 53-64.

- Singh S, Jangwal T (2012) Cost breakdown of public cloud computing and private cloud computing and security issues. IJCSIT 4: 17-31.

- Berl A, Erol G, Marco DG, Giovanni G, Henmann DM, et al (2010) Energy-efficient cloud computing. Comput. J 53: 1045-1051.

- Bento A, Bento R (2011) Cloud computing: A new phase in information technology management. JITM XXII: 39-46.

- Eric S (2009) Measuring your current performance usage. Inform IT.

- Espen BO (2006) Understanding full virtualization, para-virtualization, and hardware assisted. White paper 9: 1-15.

- Deploying sungard’s Ambit intellisuite in a virtual environment 5: 5-23.

- Eric S (2009) VMware fault tolerance what is it and how it works 6: 1-5.

- Tesi DD (2006) High availability using virtualization. Unpublished PhD thesis 9: 26-32.

- Espen BO (2006) Thesis Management of high availability services using Virtualization. Master thesis. Espen Braastad Oslo University College.
