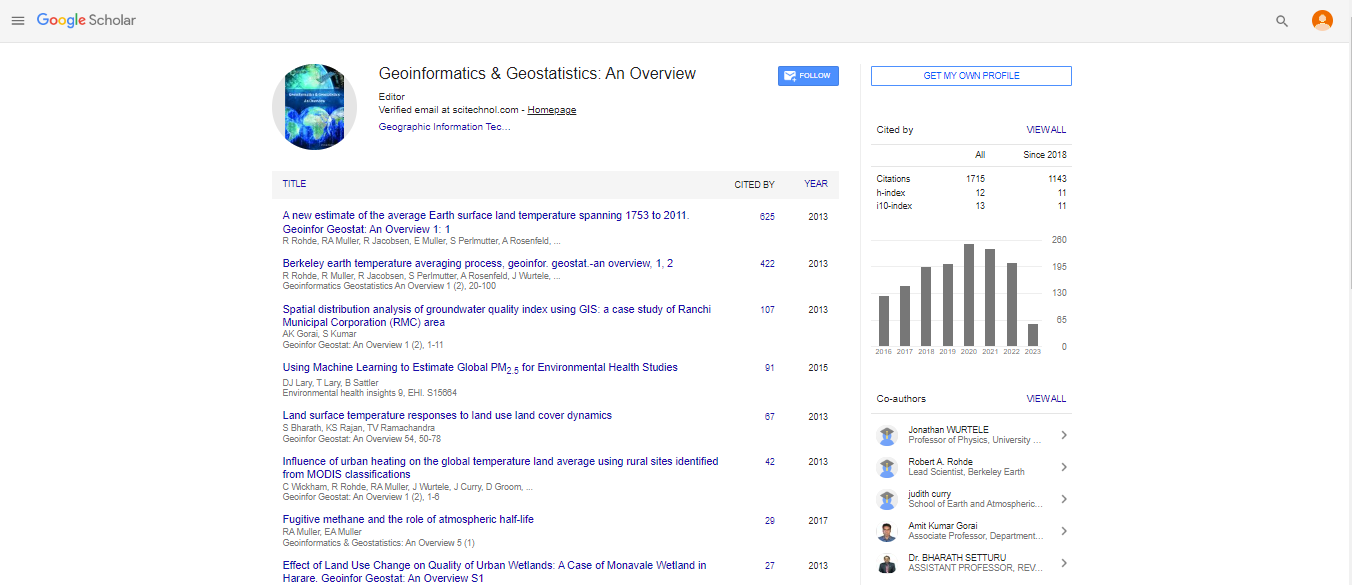

Research Article, Geoinfor Geostat An Overview Vol: 1 Issue: 2

Berkeley Earth Temperature Averaging Process

| Robert Rohde1, Richard Muller1,2,3*, Robert Jacobsen2,3, Saul Perlmutter2,3, Arthur Rosenfeld2,3, Jonathan Wurtele2,3, Judith Curry4, Charlotte Wickham5 and Steven Mosher1 | |

| 1Berkeley Earth Surface Temperature Project, Novim Group, USA | |

| 2University of California, Berkeley, USA | |

| 3Lawrence Berkeley National Laboratory, USA | |

| 4Georgia Institute of Technology, Atlanta, GA 30332, USA | |

| 5Oregon State University, Jefferson Way, Corvallis, USA | |

| Corresponding author : Richard A. Muller Berkeley Earth Surface Temperature Project, 2831 Garber St., Berkeley CA 94705, USA Tel: 510 735 6877 E-mail: RAMuller@LBL.gov |

|

| Received: January 22, 2013 Accepted: February 27, 2013 Published: March 05, 2013 | |

| Citation: Rohde R, Muller R, Jacobsen R, Perlmutter S, Rosenfeld A, et al. (2013) Berkeley Earth Temperature Averaging Process. Geoinfor Geostat: An Overview 1:2. doi:10.4172/2327-4581.1000103 |

Abstract

Berkeley Earth Temperature Averaging Process

A new mathematical framework is presented for producing maps and large-scale averages of temperature changes from weather station thermometer data for the purposes of climate analysis. The method allows inclusion of short and discontinuous temperature records, so nearly all digitally archived thermometer data can be used. The framework uses the statistical method known as Kriging to interpolate data from stations to arbitrary locations on the Earth.

Spanish

Spanish  Chinese

Chinese  Russian

Russian  German

German  French

French  Japanese

Japanese  Portuguese

Portuguese  Hindi

Hindi