Review Article, J Forensic Toxicol Pharmacol Vol: 6 Issue: 1

Mathematical Models Employed to Predict the Timeframe of Intoxications as Interpretation Tools in Forensic Cases

Quijano-Mateos A, Castillo-Alanís LA and Bravo-Gómez ME*

Forensic Science Department, School of Medicine, National Autonomous University of Mexico, Av. Universidad 3000, ZC 04510, Mexico City, Mexico

*Corresponding Author: Bravo-Gómez ME

Forensic Science Department, School of Medicine, National Autonomous University of Mexico. Av. Universidad 3000, ZC 04510, Mexico City, Mexico

Tel: +(52)-5556232300 extn. 81916

E-mail: mebravo@unam.mx

Received: June 19, 2017 Accepted: July 13, 2017 Published: July 21, 2017

Citation: Quijano-Mateos A, Castillo-Alanís LA, Bravo-Gómez ME (2017) Mathematical Models Employed to Predict the Timeframe of Intoxications as Interpretation Tools in Forensic Cases. J Forensic Toxicol Pharmacol 6:1. doi: 10.4172/2325-9841.1000153

Abstract

Toxicological analysis interpretation is a complex task in which estimating the time elapsed since a drug was last consumed, or the chronicity of consumption, provides crucial information in forensic cases, e.g. driving under the influence of drugs (DUID). This review describes some strategies reported in the literature that contribute to such estimations.

Keywords: Intoxication timeframe; Drug pharmacokinetics; Mathematical models; Forensic toxicology interpretation; Drug use estimation; Drug use differentiation; Backtracking; Pharmacokinetic prediction

Introduction

According to The International Association of Forensic Toxicologists (TIAFT), toxicological analysis is one of the basic tasks in forensic investigations [1]. The activities related to forensic toxicology include the detection, identification and quantification of substances of forensic interest, and the interpretation of results. The latter is linked to the evidentiary purpose; that is, the questions directing the analysis and the intended purpose of the evidence. Relevant questions in the forensic context are numerous and complex, for example:

• Was the amount of substance found sufficient to cause death?

• Was the person under the influence of drugs at the time of the criminal event?

• Does the substance concentration found in the sample correspond to an acute or a chronic exposure?

• When did the user last consume this drug?

Estimating the duration for which a person has been exposed to a substance or the time elapsed since a substance was last consumed provides crucial information for the toxicological exam interpretation, especially in cases related to environmental crime, poisonings, and psychopharmacological crimes. Psychopharmacological crimes are defined as those crimes committed under the influence of a psychoactive substance as a result of acute or chronic consumption; i.e. those resulting from the consumption of specific substances that stimulate actions or promote circumstances resulting in crime [2].

The attribution of a crime to the psychopharmacological effect of a substance is difficult due to the large inter-individual variability in the response to the substance, in its toxicity, and in the concentrations reached following administration of the same dose. These toxicokinetic, and often toxicodynamic, differences are determined genetically and are epigenetically modulated. They are also attributable to the different routes of administration, the administered dose, and the metabolic tolerance or clearance developed by chronic users. Therefore, the interpretation of toxicological findings in the forensic area is a very important task that requires knowledge of many aspects of analytical toxicology, toxicokinetics, organ toxicity, and the factors affecting them [3].

Due to the complexity in toxicological interpretation required for forensic purposes, toxicologists are interested in having tools such as mathematical models where drug or metabolite concentrations in a biological sample can be used to distinguish acute from chronic consumption and/or to accurately establish the timeframe of intoxication. Some of the strategies which contribute to such tools are described below.

Methods to Distinguish Acute and Chronic Exposures

The elimination time of illicit drugs and their metabolites is of both clinical and forensic interest. Clinical outcomes and legal consequences for a person will differ depending upon the results of toxicological tests. In a forensic context, it is relevant to know whether a given subject is under the influence of a drug. For example, the legal consequences associated with a car crash will be much more severe for a person who was driving under the influence of drugs than for one who was completely or legally sober. Another example is prescribed drugs: in some cases, subjects who have taken drugs under a physician’s directions cannot be prosecuted unless it is proven that they overdosed on the medication. A complex scenario arises in the prosecution of chronic users; in some cases, the defendant might receive a different sentence if addiction or dependence is proved. A positive toxicological test is not necessarily related to acute intoxication, especially for lipophilic drugs, where users might test positive even after several weeks of abstinence (e.g. cannabinoids [4]); moreover, in cases of chronic consumption, the drug’s pharmacokinetics might be altered.

Accordingly, the first step towards identifying an acute intoxication is to fully understand the elimination kinetics of the parent drug and/or its metabolites. Understanding how a substance is biotransformed and removed from the body can yield mathematical relationships, e.g. ratios of the concentrations of a xenobiotic (P) and its metabolites (M), or P/M ratios of concentrations in different biological matrices, which in theory can differentiate a recent exposure from an earlier one. For example, diazepam, a benzodiazepine commonly found in drugged drivers, is metabolized via CYP2C19 to desmethyldiazepam; a high P/M ratio between diazepam and its metabolite indicates an acute intake [3].
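The P/M relationship described above can be illustrated with a minimal sketch. The function names, the example concentrations and the cutoff are hypothetical and chosen only for illustration; the text does not give a numerical cutoff for diazepam, and any real decision value would have to come from validated studies.

```python
def parent_metabolite_ratio(parent_conc: float, metabolite_conc: float) -> float:
    """Return the parent-to-metabolite (P/M) concentration ratio.

    Both concentrations must be expressed in the same units and
    measured in the same biological matrix.
    """
    if parent_conc < 0 or metabolite_conc <= 0:
        raise ValueError("parent must be >= 0 and metabolite must be > 0")
    return parent_conc / metabolite_conc


def suggests_recent_intake(parent_conc: float, metabolite_conc: float,
                           cutoff: float) -> bool:
    """Flag a sample whose P/M ratio is at or above a user-supplied cutoff.

    The cutoff is an assumption supplied by the caller, not a value
    taken from the literature.
    """
    return parent_metabolite_ratio(parent_conc, metabolite_conc) >= cutoff


# Hypothetical example: diazepam 400 ng/mL, desmethyldiazepam 100 ng/mL.
ratio = parent_metabolite_ratio(400.0, 100.0)
flag = suggests_recent_intake(400.0, 100.0, cutoff=1.0)  # illustrative cutoff
print(ratio, flag)
```

A high ratio only points towards a recent intake; as the rest of this section shows, inter-individual variability and chronic use can shift the ratio considerably.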

Controlled administration studies

A popular approach to profiling drug and metabolite elimination patterns consists of a controlled administration of drugs to volunteers followed by monitoring of drug and its metabolites in urine and other samples like oral fluid, whole blood, and plasma.

The most representative studies in which the analysis of biological matrices follows the controlled administration of drugs of abuse have focused on cannabis, MDMA and amphetamines, cocaine, heroin and opiates, and ethyl alcohol, and are described below.

Cannabis

Cannabinoids such as Δ(9)-tetrahydrocannabinol (THC) and their metabolites are highly lipophilic, so they accumulate over time in lipid tissue and have prolonged elimination times [4,5]. Slow release of the drug from fat and significant enterohepatic circulation contribute to THC’s long terminal elimination half-life in plasma, reported as greater than 4.1 days in chronic marijuana users [6]. After the initial distribution phase, the rate-limiting step in the elimination of THC is its redistribution from lipid depots to blood [7,8]. Consequently, it is necessary to differentiate recent use from residual excretion when the probative interest demands it, e.g. for detoxification patients or in judicial programs in some countries that routinely collect urine from individuals on parole who were ordered to attend a rehabilitation program.

One of the most comprehensive studies to differentiate the recent use of cannabinoids was conducted by Anizan et al. [9]. The body fluids (blood, urine and oral fluid) of frequent and occasional consumers were monitored from 19 h before to 30 h after smoking a 6.8% THC cannabis cigarette. Concentrations of Δ(9)-tetrahydrocannabinol (THC), 11-hydroxy-THC (11-OH-THC), 11-nor-9-carboxy-THC (THCCOOH), cannabidiol (CBD), cannabinol (CBN), THC-glucuronide, and 11-nor-9-carboxy-THC-glucuronide (THCCOO-glucuronide) were compared, when possible, in different matrices. Usually, detection of a drug in oral fluid indicates recent consumption; however, the usefulness of this biological matrix is limited in time, as the substance disappears quickly. In this study, the parent THC and metabolites such as THCCOOH, CBD, and CBN, and their proportions relative to THC, were quantified by 2D-GC-MS. Results showed that THCCOOH in oral fluid could indicate oral administration of cannabis and that the length of the detection window could help distinguish chronic from occasional smokers [9].

Regarding blood and plasma samples, distinguishing recent use of cannabinoids is a more difficult task. The concentrations of THC and 11-OH-THC are significantly higher in frequent smokers than in occasional smokers at most sampling times, and at all sampling times for THCCOOH and THCCOO-glucuronide. It has been suggested that the presence of CBD, CBN, or THC-glucuronide could indicate recent use, but their absence does not exclude it [10]. Toennes et al. [11] studied the pharmacokinetic properties of THC in occasional and heavy users under cannabis and placebo conditions. Their results show that cannabinoid (parent drug and metabolite) blood concentrations from heavy users in a late elimination phase may be difficult to distinguish from concentrations measured in occasional users after acute cannabis use [11]. Despite this extensive work, differentiating occasional from frequent users relies mainly on the detection times of the same markers, which makes an accurate interpretation for forensic purposes difficult to achieve.

Regarding urine samples, extended urinary THC excretion in chronic cannabis users precludes its use as a biomarker of new drug exposure, so several authors have made important efforts to select more suitable metabolites to identify recent use. In a sequential series of urine specimens from individuals who abstained from cannabis, some specimens can occasionally have higher concentrations of THCCOOH than previous samples [12-14]. This could be a result of residual excretion of drug that has been stored in the body following chronic cannabinoid use. Most of these increases in concentration appear to be related to the individual’s hydration state, which is determined by fluid intake, environmental temperature, level of activity, disease states, and a multitude of other variables. Manno et al. [14] first suggested that urinary THCCOOH could be normalized to urinary creatinine concentration to account for specimen dilution; this normalization also makes the excretion pattern more predictable. They recommended a quotient cutoff of ≥1.5 to identify new drug use. However, Huestis and Cone found that the greatest accuracy (85.4%) in predicting new cannabis use is achieved when paired specimens collected at least 24 hours apart have a quotient of ≥0.5 for the [THCCOOH]/[creatinine] in specimen 2 divided by the [THCCOOH]/[creatinine] in specimen 1 [12]. Fraser and Worth compared both criteria for new use in a group of 26 chronic marijuana users and found a false-negative rate of 7.4% with the Huestis guideline and 24% with the Manno rule [15]. They extended the study to include 37 chronic marijuana users with at least 48 hours between specimens; with the 0.5 cutoff, new drug use was identified in 80–85% of cases [16].
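The creatinine-normalized quotient described above reduces to simple arithmetic, sketched below. The function names and the specimen values are hypothetical; the two cutoffs (1.5 and 0.5) are the ones quoted in the text, and the unit choices (ng/mL for THCCOOH, mg/mL for creatinine) are an assumption made for the example.

```python
def normalized_thccooh(thccooh_ng_ml: float, creatinine_mg_ml: float) -> float:
    """THCCOOH normalized to creatinine (ng THCCOOH per mg creatinine)."""
    if creatinine_mg_ml <= 0:
        raise ValueError("creatinine concentration must be positive")
    return thccooh_ng_ml / creatinine_mg_ml


def new_use_quotient(specimen_1: tuple, specimen_2: tuple) -> float:
    """Quotient of specimen 2 over specimen 1, each given as
    (THCCOOH ng/mL, creatinine mg/mL); specimen 1 is the earlier one."""
    earlier = normalized_thccooh(*specimen_1)
    later = normalized_thccooh(*specimen_2)
    if earlier <= 0:
        raise ValueError("earlier specimen must be THCCOOH-positive")
    return later / earlier


def indicates_new_use(specimen_1: tuple, specimen_2: tuple,
                      cutoff: float = 0.5) -> bool:
    """Apply a quotient cutoff (0.5 or 1.5 in the studies cited above)."""
    return new_use_quotient(specimen_1, specimen_2) >= cutoff


# Hypothetical paired specimens collected at least 24 h apart.
first = (100.0, 2.0)   # 50 ng/mg after normalization
second = (60.0, 1.0)   # 60 ng/mg after normalization
quotient = new_use_quotient(first, second)
print(quotient,
      indicates_new_use(first, second, cutoff=0.5),
      indicates_new_use(first, second, cutoff=1.5))
```

In this example the raw THCCOOH concentration falls from 100 to 60 ng/mL, yet the normalized quotient exceeds 1 because the second specimen is more dilute; the two cutoffs then give different answers, which mirrors the disagreement between the criteria reported above.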

Other metabolites that are not routinely measured, such as 11-OH-Δ9-THC [17] and glucuronides [18], have also been studied as biomarkers of recent cannabinoid use. In the latter study, THCCOOH, THC-glucuronide, and THCCOOH-glucuronide were quantified by liquid chromatography-tandem mass spectrometry within 24 h of collection. All analytes were measurable in all frequent smokers’ urine and in some of the occasional smokers’ urine samples [18]. An absolute percentage difference of ≥50% between 2 consecutive THC-glucuronide-positive samples, with a creatinine-normalized concentration of ≥2 μg/g in the first sample, predicted cannabis smoking with efficiencies of 93.1% in frequent and 76.9% in occasional smokers within 6 h of first sample collection. This criterion therefore provides a possible means of identifying recent cannabis intake in cannabis smokers’ urine within a short collection time frame after smoking [18].
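The THC-glucuronide criterion above can be sketched as a two-condition check. This is only an illustration: the function names are hypothetical, and the percentage difference is assumed here to be computed relative to the first sample, which the text does not state explicitly.

```python
def absolute_percent_difference(first: float, second: float) -> float:
    """Absolute difference between two consecutive samples, as a
    percentage of the first sample (an assumed definition)."""
    if first <= 0:
        raise ValueError("first sample must be positive")
    return abs(second - first) / first * 100.0


def meets_recent_intake_criterion(first_ug_g: float, second_ug_g: float,
                                  min_first_ug_g: float = 2.0,
                                  min_diff_pct: float = 50.0) -> bool:
    """Check the criterion quoted in the text: both samples positive for
    THC-glucuronide, creatinine-normalized concentration of the first
    sample >= 2 ug/g, and absolute percentage difference >= 50%."""
    if first_ug_g <= 0 or second_ug_g <= 0:
        return False  # the rule applies only to two positive samples
    return (first_ug_g >= min_first_ug_g
            and absolute_percent_difference(first_ug_g, second_ug_g) >= min_diff_pct)


# Hypothetical creatinine-normalized THC-glucuronide values (ug/g).
print(meets_recent_intake_criterion(4.0, 1.5))  # 62.5% difference
print(meets_recent_intake_criterion(1.0, 3.0))  # first sample below 2 ug/g
```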

MDMA

MDMA, or ecstasy, is excreted as unchanged drug, 3,4-methylenedioxyamphetamine (MDA), and free and glucuronidated/sulfated 4-hydroxy-3-methoxymethamphetamine (HMMA) and 4-hydroxy-3-methoxyamphetamine (HMA) metabolites. MDMA and other methylenedioxy derivatives undergo more extensive metabolism than the prototype compounds (amphetamines), and the amount of unaltered drug excreted in urine is usually smaller. Comparison of renal and total clearance of MDMA shows that about 80% of the drug is cleared metabolically through the liver, and about 20% of the dose is excreted unaltered in urine. A similar recovery has been reported for 3,4-dihydroxymethamphetamine (HHMA). Higher recovery rates have been detected for HMMA, the main metabolite found in urine (>20%), with less than 2% of the dose excreted as MDA [19–21]. The elimination half-life of MDMA is in the range of 6–9 hours [19,22], which is shorter than those reported for amphetamine and methamphetamine. Because of this rapid elimination, some strategies have been developed to find biomarkers of consumption that remain detectable for a longer time.

Abrahams et al. [23] aimed to describe the pattern and timeframe of excretion of MDMA and its metabolites in urine. Placebo, 1.0 mg/kg, and 1.6 mg/kg oral MDMA doses were administered double-blind to healthy adult MDMA users on a monitored research unit. The last detection of HMMA exceeded that of MDMA by more than 33 h after administration. Identification of HMMA as well as MDMA increased the ability to identify positive specimens but required hydrolysis. Measurement of urinary HMMA provides the longest detection of MDMA exposure, yet it is not included in routine monitoring procedures [23].

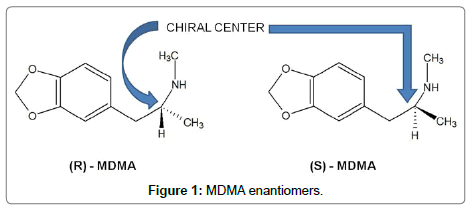

Another strategy is based on its stereoselective metabolism. MDMA has a chiral centre at the α-carbon (Figure 1) with a pair of enantiomers [24], although the racemic mixture is usually consumed.

The enantiomers may show different pharmacological activity and body disposition [25]. The (S)-isomer of 3,4-methylenedioxymethamphetamine (MDMA) is responsible for the psychostimulant and entactogenic activities, whereas the (R)-isomer has hallucinogenic properties [26]. A low molecular weight, low protein binding (around 20%), and stereoselective metabolism have been reported for MDMA [27]. The (R)- and (S)-enantiomers of racemic MDMA exhibit different dose-concentration curves. In plasma, (S)-MDMA is eliminated at a higher rate, most likely due to stereoselective metabolism [28]; the more active (S)-enantiomer had a reduced Area Under the Curve (AUC) and a shorter half-life than (R)-MDMA [29]. In general, all metabolites (Phase I and Phase II) exhibited changes in enantiomeric disposition over time. Schwaninger et al. [28] characterized the enantiomeric disposition of the metabolites in a controlled administration study. MDA, HMMA, DHMA sulfate, and HMMA glucuronide were mainly excreted as (S)-enantiomers within the first 24 h after ingestion, but higher percentages of (R)-enantiomers were noted after 24 h. Concerning the time of first detection, no differences were observed between the two enantiomers, but detectability generally tended to be longer for (R)-enantiomers, although for MDA, DHMA and HMMA no significant differences were observed. Pizarro et al. [30] reported similar findings for the metabolite MDA: the initial R/S ratio is <1, but the total urinary excretion over 72 h is comparable for the (R)- and (S)-enantiomers [30]. Furthermore, R/S HMMA ratios determined after conjugate cleavage were about 1 after 24 h, and about 2 in total [30]. R/S ratios over time showed low intersubject variability and a dependence on time after ingestion, but not on the MDMA dose administered; accordingly, proper cut-off R/S values may distinguish between recent (within 24 h) MDMA consumption and earlier ingestion. R/S ratios for DHMA and HMMA, as only minor metabolites, were generally detectable only in the first 24 h after ingestion, thereby providing a further indication of recent MDMA consumption [28].

Cocaine

Cocaine (COC), a psychoactive drug from the coca leaf, is the second-most identified drug of abuse in drug-testing programs. Cocaine is taken for its intense euphoric and stimulatory effects, despite well-documented cardiotoxic effects [31]. This compound is primarily metabolized by the liver to two major, inactive metabolites: benzoylecgonine (BE) and ecgonine methyl ester (EME) [32]. Immunoassays to detect cocaine are targeted against the metabolite benzoylecgonine and use a cut-off of 300 ng/mL. An intravenous dose of 20 mg cocaine can be detected for 1.5 days. Street doses (administered via different routes) are detectable up to 1 week, and extremely high doses up to 3 weeks [33].

Cone et al. [34] reported the urinary excretion patterns and terminal elimination kinetics for cocaine (COC) and 8 metabolites: benzoylecgonine (BE), ecgonine methyl ester (EME), norcocaine (NCOC), benzoylnorecgonine (BNE), m-hydroxy-BE (m-HO-BE), p-hydroxy-BE (p-HO-BE), m-hydroxy-COC (m-HO-COC), and p-hydroxy-COC (p-HO-COC), following approximately equipotent doses of cocaine by the intravenous (IV), smoking (SM), and inhalation (IN) routes of administration. m-HO-BE demonstrated the longest half-life (mean range 7.0-8.9 h), and cocaine displayed the shortest (2.4-4.0 h) [34]. Klette et al. [35] proposed the measurement of nonhydrolytic cocaine metabolites (m-HO-BE, p-HO-BE, and BNE) in urine as a means of differentiating between adulteration or “doping” of urine specimens and true cocaine exposure [35]. These metabolites exhibit the longest half-lives and are frequently detected along with BE, although at lower concentrations and consequently with shorter detection times [34]. Isenschmid et al. [36] studied plasma from 10 human subjects at various intervals after administration of two rapid doses of cocaine, either intravenously or by smoking, and of multiple doses by both routes, confirming that BE is the main metabolite of COC in blood; EME, when present, did not exceed 5% of the BE concentration. They concluded that EME arises primarily as a result of non-metabolic (in vitro) hydrolysis of COC in blood. The presence of significant quantities of EME in urine is probably due to accumulation [36]. Consequently, detection of metabolites produced only by oxidative metabolic pathways (m-HO-BE, p-HO-BE, and BNE), and not by hydrolysis, would serve as irrefutable evidence that cocaine had been consumed recently by an individual [34], ruling out adulteration of the sample and errors associated with non-enzymatic hydrolysis.

Jufer et al. [37] investigated the effects of chronic oral cocaine administration in healthy volunteer subjects with a history of cocaine abuse. Subjects were housed on a closed clinical ward and were administered oral cocaine in up to 16 daily sessions. Plasma and saliva specimens were collected periodically during the dosing sessions and during the one-week withdrawal phase at the end of the study. Two phases of urinary elimination of cocaine and metabolites were observed. An initial elimination phase was observed during withdrawal that was similar to the elimination pattern observed after acute dosing. A terminal elimination phase was also observed for cocaine metabolites, which suggests that cocaine accumulates in the body with chronic use, resulting in a prolonged terminal elimination phase for cocaine and its metabolites [37]. Because urinalysis cannot distinguish recent use from the elimination of cocaine accumulated in the body as a result of chronic use, the use of an alternative matrix, oral fluid, has been proposed to overcome this problem.

Oral fluid is an attractive alternative matrix for drug testing, with a non-invasive and directly observed collection, but there are few controlled cocaine administration studies to guide interpretation. Scheidweiler et al. [38] observed that BE:COC and EME:COC ratios in oral fluid generally increased over time, indicating that a specimen containing high concentrations of cocaine relative to BE and EME suggests recent cocaine use [38].
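The metabolite-to-parent ratios mentioned above can be computed for a series of oral fluid specimens as in the following sketch. The `OralFluidSample` type and all concentrations are hypothetical illustration values, not data from the cited study.

```python
from typing import NamedTuple


class OralFluidSample(NamedTuple):
    hours_after_dose: float
    coc: float   # cocaine, ng/mL
    be: float    # benzoylecgonine, ng/mL
    eme: float   # ecgonine methyl ester, ng/mL


def metabolite_ratios(sample: OralFluidSample) -> tuple:
    """Return (BE:COC, EME:COC) for one specimen."""
    if sample.coc <= 0:
        raise ValueError("ratio undefined when cocaine is not detected")
    return sample.be / sample.coc, sample.eme / sample.coc


# Hypothetical time series after a single dose.
series = [
    OralFluidSample(0.5, 400.0, 40.0, 20.0),
    OralFluidSample(2.0, 100.0, 50.0, 25.0),
    OralFluidSample(6.0, 10.0, 30.0, 12.0),
]

be_coc = [metabolite_ratios(s)[0] for s in series]
eme_coc = [metabolite_ratios(s)[1] for s in series]
print(be_coc, eme_coc)
```

In this made-up series both ratios rise with time, so a specimen with low ratios (cocaine high relative to BE and EME) would be read as pointing to more recent use.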

Heroin and opiates

Smith et al. [39] reported a comprehensive examination of pharmacokinetics, pharmacodynamics, detection times, opiate immunoassay performance, and urine excretion profiles following single doses of heroin administered to human subjects via the smoking and intravenous routes. The parameters studied were not significantly dependent on the route of administration [39]. Beck et al. [40] evaluated the usefulness of the heroin metabolite 6-acetylmorphine (6-AM) as the primary analytical target in combination with morphine in the screening assay. Urine samples from patients undergoing heroin substitution treatment were investigated for 6-AM and opiates. By comparing 6-AM and opiate screening results at different cut-off levels, it was observed that 7-8% of the samples and 12.5% of the patients with detectable 6-AM had an unexpectedly low content of free and total morphine in the urine. It was concluded that 6-AM is a valuable target analyte in the screening of drugs of abuse in urine and may be used in combination with opiate screening in clinical testing [40]. Along the same line of work, Cone et al. [41] reported the urinary excretion patterns of 6-AM, free morphine, and total morphine for six human subjects who received single doses of 3.0 and 6.0 mg of heroin hydrochloride. Following heroin administration, 6-AM was excreted rapidly, with a detection range of 2-8 h at the most sensitive cut-off limit; this short detection time limits the usefulness of 6-AM as a marker for the identification of heroin abusers to a period immediately after drug use. After morphine and codeine administration, no 6-AM was detected by GC/MS above the 0.81-ng/mL detection limit of the assay, so the presence of 6-AM in urine can be interpreted with confidence to mean that heroin, or 6-AM, was administered within 24 h of specimen collection [41]. Beyond heroin abuse, Oyler et al. [42] investigated the occurrence of hydrocodone excretion in urine specimens of subjects who were administered codeine. Confirmation of hydrocodone in a urine specimen was always accompanied by codeine detection, confirming that hydrocodone can be produced as a minor metabolite of codeine in humans and may be excreted in urine [42]. Further studies are needed to differentiate recent consumption of hydrocodone from that of codeine.

Ethyl alcohol

Ethyl alcohol is a legal and socially accepted recreational drug. Its abuse may cause numerous problems for the individual and society. Casualties of car accidents caused by drunk drivers, aggressive behavior, family problems and decreased work performance are the main problems connected with alcohol abuse. Alcoholism is one of the most frequent dependences. The early detection of alcohol abuse could allow for faster introduction of treatment, which makes diagnostics essential to solving the problem. The easiest and most effective way of proving recent alcohol consumption is to confirm its presence in biological samples taken from the individual. However, the main disadvantage of this method is the short detection window for ethanol, because of its rapid elimination. Many biochemical laboratory markers of low consumption, abuse or alcohol dependence have been introduced in recent years. However, their clinical utility is limited by their half-lives in biological fluids [43]. Nowadays, in order to prevent and better control alcohol abuse, markers that provide a better view of short- and long-term ethanol consumption are in frequent use. Ethyl alcohol in the body causes many qualitative and quantitative disturbances in biochemical metabolites that could be used as markers of its consumption. Cylwik et al. [43] explored the usefulness of non-oxidative metabolites of ethanol as new markers of recent alcohol consumption: fatty acid ethyl esters (FAEEs), ethyl glucuronide (EtG), ethyl sulphate (EtS) and phosphatidylethanol (PEth). The diagnostic value of these markers for detecting recent consumption seems to be higher than that of commonly used laboratory tests because their detection times in biological fluids fall between those of the short-term markers (ethanol, methanol, HTOL/HIAA index) and the long-term markers (CDT, GGT, MCV), that is, between one day and one week [43]. Sulfate conjugation is a normal but minor metabolic pathway for ethanol in humans, and EtS is a common constituent of urine after alcohol intake. It has also been indicated that the concurrent determination of EtS and EtG improves sensitivity when they are used as biomarkers of recent drinking [44]. Additionally, because EtG and FAEEs can be detected in hair for months, they can be used as indicators of chronically harmful alcohol consumption [45].

Monitored abstinence studies

Another approach consists of quantifying parent drugs and/or their metabolites during monitored abstinence of volunteers. Detection windows have been described for cannabis in oral fluid [46–48], urine [48–50], and blood [48,51]; for cocaine in plasma and saliva [52] and in urine [50,53]; and for heroin and barbiturates in urine [50]. However, these studies do not allow an occasional user to be differentiated from a chronic one, nor do they provide a model for predicting elimination.

Although the studies made with either approach provide useful information, the variable control is less than perfect as samples might come from both occasional and frequent users, as well as polydrug users [50].

Methods to Estimate the Timeframe of Drug Use and Backtracking Calculation

Once the use of a drug has been established, another concern for the forensic toxicologist is estimating the time interval elapsed between the last drug use and the time of sample collection. Knowing the timeframe of drug use and accurately estimating the time elapsed since the last drug use is important in studies related to clinical trials, road accidents [54], and human performance evaluation [55], among others. Back-calculation requires knowledge of the pharmacology of the relevant substance, particularly its pharmacokinetics. In some cases, this time lapse can be calculated from pharmacokinetic parameters [56]. To properly prove the use or influence of a drug on human performance, toxicologists must keep in mind that the half-life of some analytes can be very short, depending upon the matrix tested. For example, if a urine sample is tested for a specific drug or metabolite, then the analyst should consider its elimination rate in that same matrix. On the other hand, the toxicologist should be aware that the identification of a substance does not necessarily mean recent drug use or influence on human performance. For example, Niedbala et al. [57] reported that two subjects who did not smoke cannabis but were in the room while others smoked had some positive screening results but no confirmed positive oral fluid cannabinoid tests [57]; however, data in the literature are limited.

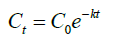

The simplest methods for backtracking calculations are derived from basic pharmacokinetics. After administration, the concentration of drug in blood rises to a maximum (Cmax) during the absorption phase and begins to decline during the distribution and elimination processes, usually following an exponential curve that relates plasma concentration and time. If the details of the curve are known, it is possible to predict drug concentrations at any point on the curve. However, calculations are often restricted to the elimination phase unless details of dose and time of administration are known. Some information is needed to allow the curve to be defined, and difficulties arise if insufficient information is available. Typically, all that is known is the concentration of the drug of interest measured in a blood specimen at a given time. The shape of the curve in the elimination phase can adopt a variety of forms, depending on the dose of substance taken and its distribution and metabolism. For most drugs, the rate of elimination depends on the concentration of drug present, so a constant proportion of the drug is eliminated in a given time interval and the curve is exponential (Equation 1).

Where: Ct is the drug concentration at time t; C0 is the theoretical drug concentration that would be obtained if the drug had been administered at time t=0 and distributed instantaneously throughout the circulating blood; and k is the elimination rate constant.
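As an illustration of how these quantities are used in a backtracking calculation, the sketch below assumes that Equation 1 has the standard first-order form Ct = C0·e^(−kt), which is consistent with the definitions above; solving for t gives t = ln(C0/Ct)/k. The function names and the example values (half-life, concentrations) are hypothetical, and the calculation is only meaningful within the elimination phase.

```python
import math


def rate_constant_from_half_life(half_life_h: float) -> float:
    """Elimination rate constant k (1/h) from the half-life: k = ln(2) / t_half."""
    return math.log(2) / half_life_h


def concentration_at(c0: float, k: float, t_h: float) -> float:
    """Concentration at time t for first-order elimination: Ct = C0 * exp(-k * t)."""
    return c0 * math.exp(-k * t_h)


def elapsed_time(c0: float, ct: float, k: float) -> float:
    """Time needed to fall from C0 to Ct: t = ln(C0 / Ct) / k."""
    if c0 <= 0 or ct <= 0 or k <= 0:
        raise ValueError("concentrations and k must be positive")
    return math.log(c0 / ct) / k


def back_extrapolate(ct: float, k: float, hours_before: float) -> float:
    """Estimate the concentration a given number of hours before sampling."""
    return ct * math.exp(k * hours_before)


# Hypothetical drug with a 6 h half-life.
k = rate_constant_from_half_life(6.0)
print(elapsed_time(c0=100.0, ct=25.0, k=k))         # two half-lives
print(back_extrapolate(ct=25.0, k=k, hours_before=6.0))
```

Because k varies considerably between individuals, a forensic estimate would normally be reported as a range obtained from a plausible interval of k values rather than as a single figure.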

However, for a few drugs given in high doses, including alcohol, a constant amount of drug is eliminated per unit of time and the curve is effectively linear allowing the simplest backtracking calculation [56].
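For this linear (zero-order) case the backtracking calculation is a straight-line extrapolation, sketched below. The function names are hypothetical, and the elimination rate used in the example (0.15 g/L per hour) is an illustrative assumption, not a value given in the text; real casework would use a range of rates.

```python
def back_extrapolate_zero_order(measured: float, rate_per_h: float,
                                hours_before: float) -> float:
    """Concentration a given number of hours before sampling, assuming a
    constant amount is eliminated per unit time:
    C_earlier = C_measured + rate * hours."""
    if rate_per_h < 0 or hours_before < 0:
        raise ValueError("rate and time must be non-negative")
    return measured + rate_per_h * hours_before


def hours_between(c_start: float, c_end: float, rate_per_h: float) -> float:
    """Time needed to fall linearly from c_start to c_end."""
    if rate_per_h <= 0:
        raise ValueError("rate must be positive")
    return (c_start - c_end) / rate_per_h


# Hypothetical example: 0.50 g/L measured 2 h after the event,
# with an assumed elimination rate of 0.15 g/L per hour.
print(back_extrapolate_zero_order(0.50, 0.15, 2.0))
print(hours_between(0.80, 0.50, 0.15))
```

The extrapolation is valid only if absorption was complete at the earlier time point; otherwise the true concentration may have been lower than the estimate.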

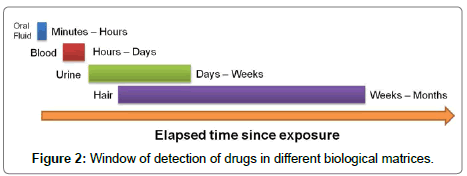

In some cases, the elimination process is advanced, and the drug or even its metabolites in other matrices should be considered for estimating the temporality. The elimination kinetics of a substance allow the type of exposure to be inferred from concentrations in different biological matrices, considering that each matrix has a useful range that results from the half-life of the substance and its metabolites. This is a very simple approach to discerning the time elapsed since some exposures. It is generally accepted that the detection time is longest in hair, followed by urine, sweat, oral fluid, and blood (Figure 2). Windows of detection depend mainly upon the dose administered, the route of administration, the frequency of drug use, interindividual variation in metabolism and renal clearance, the sample matrix, its pH and concentration (urine, oral fluid), the analyte to be determined, as well as the sensitivity of the analytical method and the sample preparation [33]. In blood or plasma, most drugs of abuse can be detected at ng/mL levels for 1 or 2 days. In urine, the detection time of a single dose is 1.5 to 4 days; however, in chronic users, drugs of abuse can be detected in urine for approximately 1 week after last use depending upon the drug, and in extreme cases even longer in cocaine and cannabis users. In oral fluid, drugs of abuse can be detected for 5-48 hours after use at ng/mL levels [55].

Sound conclusions when interpreting urine drug tests are derived from appropriate, thorough and cautious testing. For example, when two or more consecutive urine samples from the same individual are positive, it is crucial to correctly identify whether the individual has taken the drug between the sampling times [55]. Knowing the window of detection of drugs is essential in urine, as it is for other matrices. However, greater accuracy in determining the time of drug influence is sometimes necessary. Better time predictions can be obtained by interpolating the analyte concentrations in different matrices [58].

The interpretation of the results of toxicological tests and the estimation of the temporality of intoxication can be improved if they consider more specific pharmacokinetic or physicochemical properties of each drug in order to develop models and approaches. Studies have been made regarding detection time for drugs of abuse by means of controlled administration in volunteers going through detoxification, frequently with chronic administration [55]. Such experimental models developed to calculate the time frame of intoxication for several drugs are presented below.

Cannabis

Currently, studies aim to evaluate impairment through the estimation of the timeframe of drug use and its relation to pharmacokinetic parameters [59]. Unlike in the case of alcohol use, cognitive impairment due to cannabis use is not easily related to plasma concentrations of THC or its metabolites. This is because the maximum effects can occur at times different from that of the maximum plasma concentration. The effects on the brain occur while the concentration of the active analyte decreases. This process is called hysteresis [60]. Sites of action (CB1 and CB2 receptors) are often within the brain or peripheral nerve tissues, and it is important to understand the processes and time frames for the drugs to reach and leave these sites. Pharmacokinetic and pharmacodynamic studies have made it possible to establish a relation between the blood concentrations of THC and those of its metabolites. THC is absorbed and distributed quickly to the tissues; once the blood/tissue equilibrium is achieved, a direct correlation between blood THC and its effect is observed. It is also worth mentioning that THC, like other lipophilic drugs, can accumulate in fatty tissue and thus remain detectable in urine for months after its last use; hence, distinguishing a recent use becomes a challenging task.

Many studies have found a relationship between cannabis dose and performance impairment, including impaired coordination, tracking, perception, vigilance, perceptual motor speed, accuracy and multitasking, all of which are important requirements for safe driving [61–64]. Evidence suggests that recent smoking and/or blood THC concentrations of 2–5 ng/mL are associated with substantial driving impairment, particularly in occasional smokers [62]. However, most of these studies did not attempt to correlate plasma or blood THC concentrations with observed effects; instead, they demonstrated that impairment depends on the time after use, with most subjects showing no impairment 24 hours after administration. Few studies have focused on estimating the time of last drug use. Two models have been reported that estimate the time of last cannabis use from plasma cannabinoid concentrations. One predictive model uses the plasma concentration of THC, while the second is based on the ratio of THCCOOH to THC concentrations [60,65]. Both models were found to predict the time of last use in about 90% of cases. These mathematical models were further evaluated in another controlled drug administration study, which extended the validation to a large number of plasma samples collected after single and multiple doses of THC and examined the effectiveness of the models at low plasma cannabinoid concentrations. Plasma THC and THCCOOH were determined by GC/MS, and the times of cannabis smoking predicted by each model were compared with the actual smoking times. The most accurate approach applied a combination of the models [54]. The models provide an objective and validated method for assessing the contribution of cannabis to accidents or clinical symptoms.
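The two plasma models can be illustrated with a short Python sketch. It assumes the log-linear forms and coefficients commonly quoted for the models of Huestis et al. [65], namely log T = -0.698 log[THC] + 0.687 for the THC model and log T = 0.576 log([THCCOOH]/[THC]) - 0.176 for the ratio model, with T in hours and plasma concentrations in ng/mL. These values are not stated in this review and should be verified against the original publication; the function names are illustrative only.

```python
import math

# ASSUMPTION: coefficients as commonly quoted for the models in [65];
# they are not given in this review and must be checked against the source.
MODEL_I = (-0.698, 0.687)   # log10(T) = slope * log10([THC]) + intercept
MODEL_II = (0.576, -0.176)  # log10(T) = slope * log10([THCCOOH]/[THC]) + intercept


def hours_since_use_model_i(thc_ng_ml, coeffs=MODEL_I):
    """Estimate hours since last use from plasma THC alone (ng/mL)."""
    if thc_ng_ml <= 0:
        raise ValueError("THC concentration must be positive")
    slope, intercept = coeffs
    return 10 ** (slope * math.log10(thc_ng_ml) + intercept)


def hours_since_use_model_ii(thc_ng_ml, thccooh_ng_ml, coeffs=MODEL_II):
    """Estimate hours since last use from the plasma THCCOOH/THC ratio."""
    if thc_ng_ml <= 0 or thccooh_ng_ml <= 0:
        raise ValueError("concentrations must be positive")
    slope, intercept = coeffs
    return 10 ** (slope * math.log10(thccooh_ng_ml / thc_ng_ml) + intercept)


if __name__ == "__main__":
    # Hypothetical plasma values, for illustration only.
    print(round(hours_since_use_model_i(10.0), 2))
    print(round(hours_since_use_model_ii(10.0, 40.0), 2))
```

Under these assumed coefficients, a lower THC concentration or a higher THCCOOH/THC ratio yields a longer estimated interval. The sketch returns point estimates only and does not reproduce the confidence intervals or the combined-model approach evaluated in [54].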

Predictive mathematical models that calculate the time of last exposure from whole blood concentrations typically employ a theoretical whole blood-to-plasma (WB/P) ratio of 0.5. Before the study by Karschner et al. [66], no studies had evaluated predictive models utilizing empirically derived WB/P ratios, or whole blood cannabinoid pharmacokinetics after subchronic THC dosing. In that study, ten male chronic, daily cannabis smokers received escalating around-the-clock oral THC (40-120 mg daily) for 8 days. Cannabinoids were quantified in whole blood and plasma by two-dimensional gas chromatography-mass spectrometry. Predictive models estimating time since last cannabis intake from whole blood and plasma cannabinoid concentrations were inaccurate during abstinence, but highly accurate during active THC dosing. THC redistribution from large cannabinoid body stores and high circulating THCCOOH concentrations create pharmacokinetic profiles that differ from those of the less-than-daily cannabis smokers whose data were used to derive the models. Thus, the models do not accurately predict the time of last THC intake in individuals consuming THC daily [66].
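As a worked illustration of the theoretical WB/P ratio mentioned above, the following Python sketch converts a whole blood concentration into a plasma-equivalent value before it is fed to a plasma-based model. The function name and the example concentrations are hypothetical; only the default ratio of 0.5 comes from the text.

```python
def plasma_equivalent(whole_blood_ng_ml, wb_p_ratio=0.5):
    """Convert a whole blood concentration to a plasma-equivalent one.

    wb_p_ratio is the whole blood-to-plasma ratio; 0.5 is the theoretical
    value cited in the text. An empirically derived ratio can be passed
    instead.
    """
    if wb_p_ratio <= 0:
        raise ValueError("WB/P ratio must be positive")
    return whole_blood_ng_ml / wb_p_ratio


if __name__ == "__main__":
    # Hypothetical whole blood THC of 2 ng/mL with the theoretical ratio.
    print(plasma_equivalent(2.0))
```

Because the ratio is a divisor, a small error in the assumed WB/P value translates directly into the plasma-equivalent concentration and therefore into any time estimate derived from it.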

MDMA

The literature reports that MDMA undergoes extensive enantioselective disposition in humans. Two models have been reported to estimate the time of last MDMA use, both based on the enantioselective disposition of MDMA and its metabolites; one employs plasma concentrations and the other urinary concentrations. The first was reported by Fallon et al. [29], who studied the enantioselective disposition of MDMA and MDA following oral administration of racemic MDMA (40 mg) to eight male volunteers. The result was a predictive model (Equation 2) using either total or individual enantiomer drug plasma concentrations. The model was tested by multiple regression analysis. Fitting it with the natural logarithms of the enantiomer concentrations as predictors yielded highly significant results [F = 220 (df = 2,42), P<0.00005] and a further improved r² value of 0.913. As well as being highly significant overall (P<0.00005), each coefficient was also significant to the model (P<0.01), i.e., both enantiomer concentrations were required. The residuals of the model were examined to check the assumptions of regression analysis, which were reasonably satisfied [29].

Mathematical modeling of plasma enantiomeric composition versus sampling time demonstrated that stereochemical data can be used to predict the time elapsed after drug administration. The model is accurate enough to predict within a 6-h range. However, the authors conclude that future experiments involving more subjects and more frequent sampling may allow refinement of the model and sharper predictions [29].

t = 13.17 ln[(R)-MDMA] - 12.78 ln[(S)-MDMA] - 5.12

Where t is the time in hours after drug ingestion, and (R)-MDMA and (S)-MDMA are the plasma concentrations of the individual enantiomers, as proposed by Fallon et al. [29].
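The regression equation above can be evaluated directly. The following Python sketch is a minimal illustration only: the function name is hypothetical, and the concentrations are assumed to be expressed in the same units used by Fallon et al. [29], which this review does not state.

```python
import math


def hours_since_mdma_ingestion(r_mdma, s_mdma):
    """Evaluate t = 13.17 ln[(R)-MDMA] - 12.78 ln[(S)-MDMA] - 5.12.

    r_mdma and s_mdma are the plasma concentrations of the (R)- and
    (S)-enantiomers. ASSUMPTION: both are in the units of the original
    study [29]; check the source before applying the equation.
    """
    if r_mdma <= 0 or s_mdma <= 0:
        raise ValueError("enantiomer concentrations must be positive")
    return 13.17 * math.log(r_mdma) - 12.78 * math.log(s_mdma) - 5.12


if __name__ == "__main__":
    # Hypothetical enantiomer concentrations, for illustration only.
    print(round(hours_since_mdma_ingestion(30.0, 12.0), 1))
```

Since the model is reported to predict within a 6-h range, a value returned by such a function is better read as the centre of an interval than as an exact time.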

The second model was reported by Schwaninger et al. [28] after a controlled study characterizing enantiomer disposition in urine. They used R/S concentration ratios to predict the time elapsed since consumption. The following R/S cut-offs were selected to divide the data into time of ingestion earlier or later than 24 h: R/S of 2 for MDMA, HMMA sulfate and HMMA glucuronide, and R/S of 1 for MDA, HMMA and DHMA sulfate. The percentages of samples assigned correctly to these groups at the chosen R/S ratio were 87% (MDMA), 91% (MDA), 93% (HMMA), 91% (DHMA sulfate), 96% (HMMA sulfate) and 96% (HMMA glucuronide). Changes in R/S ratios could be observed over time for all analytes, with steady increases in the first 48 h. R/S ratios could therefore help to roughly estimate the time of MDMA ingestion. This model is less accurate than the plasma-based model because it employs cut-offs instead of a mathematical equation; however, it significantly improves the interpretation of urinary MDMA and metabolite concentrations in clinical and forensic toxicology [28].
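The cut-off approach can be sketched as a simple lookup in Python. The cut-off values are those listed above; the direction of the decision (a ratio above the cut-off suggesting ingestion more than 24 h earlier) is inferred from the reported steady increase of R/S ratios over time, and the handling of a ratio exactly equal to the cut-off is an assumption. The function and dictionary names are hypothetical.

```python
# R/S cut-offs reported by Schwaninger et al. [28] for urine.
RS_CUTOFFS = {
    "MDMA": 2.0,
    "HMMA sulfate": 2.0,
    "HMMA glucuronide": 2.0,
    "MDA": 1.0,
    "HMMA": 1.0,
    "DHMA sulfate": 1.0,
}


def ingestion_window(analyte, r_conc, s_conc):
    """Classify a urine specimen by its R/S enantiomer ratio.

    ASSUMPTION: because R/S ratios rise with time, a ratio above the
    cut-off is read as ingestion more than 24 h before sampling; a ratio
    equal to the cut-off is treated as within 24 h.
    """
    if s_conc <= 0 or r_conc < 0:
        raise ValueError("invalid enantiomer concentrations")
    ratio = r_conc / s_conc
    return "more than 24 h" if ratio > RS_CUTOFFS[analyte] else "within 24 h"


if __name__ == "__main__":
    # Hypothetical urinary enantiomer concentrations.
    print(ingestion_window("MDMA", 300.0, 100.0))
    print(ingestion_window("MDA", 40.0, 80.0))
```

Classifying several analytes from the same specimen and checking whether they agree is one way to use the reported per-analyte accuracies, although the source does not describe a combined decision rule.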

Cocaine

Some reports in the literature suggest that metabolite:cocaine ratios could prove useful for estimating the time of last use after intravenous, intranasal, smoked and subcutaneous cocaine administration [37,67,68]. After controlled subcutaneous cocaine administration, Scheidweiler et al. [38] found that metabolite:cocaine ratios increased over time, a trend that is potentially helpful for interpreting the time of last use. BE:COC and EME:COC ratios generally increased over time, indicating that a specimen containing high concentrations of cocaine relative to BE and EME suggests recent cocaine use. However, multiple dosing or binge use may produce results that differ from those of single-administration studies [38]. Comparing cocaine, BE and EME concentrations in different matrices may also be useful: median cocaine and EME oral fluid-to-plasma (S/P) ratios were generally greater than one from 0.5–24 h after both doses, while BE S/P ratios were typically less than one. Nevertheless, S/P ratios are highly variable between subjects following subcutaneous cocaine [38].
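The ratios discussed above are simple quotients, as the following Python sketch shows. No decision thresholds are given in the source, so the sketch only computes the ratios; the function names and example concentrations are hypothetical.

```python
def metabolite_ratios(cocaine, be, eme):
    """Return BE:COC and EME:COC ratios for one specimen.

    All three concentrations must be in the same units. Lower ratios
    (more cocaine relative to its metabolites) suggest more recent use.
    """
    if cocaine <= 0:
        raise ValueError("cocaine concentration must be positive")
    return {"BE:COC": be / cocaine, "EME:COC": eme / cocaine}


def oral_fluid_to_plasma(oral_fluid, plasma):
    """Return the oral fluid-to-plasma (S/P) ratio for one analyte."""
    if plasma <= 0:
        raise ValueError("plasma concentration must be positive")
    return oral_fluid / plasma


if __name__ == "__main__":
    # Hypothetical specimens from the same subject at two time points.
    early = metabolite_ratios(cocaine=100.0, be=50.0, eme=25.0)
    late = metabolite_ratios(cocaine=10.0, be=80.0, eme=20.0)
    print(early, late)
    print(oral_fluid_to_plasma(oral_fluid=60.0, plasma=40.0))
```

Given the inter-subject variability and the effect of repeated dosing noted in the text, such ratios are better compared within a subject over time than against a fixed cut-off.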

Opioids

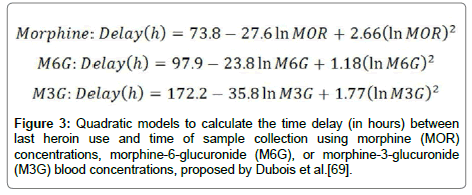

A model to estimate the time interval between the last use of heroin and blood collection has been reported by Dubois et al. [69]. The study was conducted on 11 patients, all of them heroin users undergoing detoxification. Several plasma samples were taken during the detoxification process, and the concentrations of the heroin metabolites 6-acetylmorphine (6AM), morphine (MOR), morphine-6-glucuronide (M6G) and morphine-3-glucuronide (M3G) were determined by UHPLC-MS-MS. Quadratic equations were established for all metabolites except 6AM (Figure 3). The results suggest that plasma concentrations of heroin metabolites might satisfactorily predict the time elapsed between the last heroin use and sample collection in chronic drug users. The mathematical equations modeling the time course of MOR, M6G and M3G after heroin inhalation were derived from the times of last use stated by the 11 patients, who were undergoing Rapid Opioid Detoxification under Anesthesia (RODA). These models were used to estimate the time of last heroin use; however, the reliability of the equations was evaluated in the same patients from whom they were derived.

Figure 3: Quadratic models to calculate the time delay (in hours) between last heroin use and sample collection from blood concentrations of morphine (MOR), morphine-6-glucuronide (M6G) or morphine-3-glucuronide (M3G), proposed by Dubois et al. [69].

A major issue with this study is that the model only works for chronic heroin users, because the equations involving MOR, M6G and M3G concentrations take into account residual metabolites from prior heroin use [69].

Discussion and Conclusion

Analysis of the results of toxicological tests is a delicate task, in which the interpretation of the concentrations of substances (drugs and/or metabolites) in body fluids is clearly directed by the attorney’s questions. This article reviews some strategies developed to distinguish recent from chronic consumption and to calculate the time elapsed between consumption and the event being investigated.

Identifying and quantifying a substance in a particular biological sample does not necessarily mean that it can be directly linked to a crime. In some cases, it is necessary to estimate when the substance was last consumed and when the maximum plasma concentrations occurred. These times can be better correlated with impairment of psychomotor functions in forensic investigations related to DUID cases or in clinical evaluations. For substances like amphetamine, there is a positive relationship between blood amphetamine concentrations and impairment [70]. In contrast, the onset of the impairing effects of THC lags behind the increase in plasma concentration during absorption, and the effects then remain relatively constant while the concentration decreases dramatically because of THC distribution and metabolism (hysteresis) [71]. In addition, interpretation becomes complex because some substances are rapidly eliminated from the body, and the time necessary to obtain a warrant for a biological sample can exceed the plasma half-life of the substance. The route of administration and the dose also play a determining role.

In standard practice, the drug concentration in a sample can be compared with reference values to determine whether it reflects a therapeutic or a toxic exposure. Ratios relating the parent drug to its metabolites (P/M ratios) can be useful in resolving whether an administration is acute (high P/M ratio) or chronic. However, it is difficult to establish an accurate timeframe for exposure from these data alone. Even though several methods have been developed to perform toxicological tests on different biological samples [72–77], there are no validated methods to calculate the time elapsed since the last consumption of a substance (except for cannabis) from a positive drug test [78,79], and there are few models to estimate plasma drug concentrations from those determined in other biological matrices. Some studies have focused on establishing the correlation of drug concentrations in paired samples, such as whole blood and urine [66] or blood and oral fluid [80]. However, most of them have been published for cannabis, amphetamine and, in rare cases, cocaine. These models are based on studies of controlled drug administration in volunteers, most of them with a history of single or multiple drug use.

The studies reported in the literature that address the questions raised in the introduction follow one of two strategies: analysis of concentrations during the elimination phase in volunteers, or controlled administration studies. Both approaches have advantages and limitations. In the first approach, the main problems are that subjects may be polydrug users and that samples may come from both occasional and frequent users; chronic exposure changes pharmacokinetic parameters, so poor control over these variables complicates the interpretation of the data. Controlled administration studies, on the other hand, overcome the problem of variable control, but legal and ethical considerations in humans lead to the use of doses lower than street doses; in addition, such studies cannot be performed with all kinds of drugs and must be carried out on voluntary chronic users after cessation, for example prison inmates or rehabilitation patients. Although these studies provide valuable information for estimating windows of detection in frequent users of large amounts of drugs, they seldom offer data for occasional users of single doses.

This paper presented interesting and useful approaches for several drugs, based on specific physicochemical and pharmacological characteristics. These approaches expand the analytical determination to other metabolites or biomarkers in different matrices in order to follow drug-to-metabolite or metabolite-to-metabolite concentration ratios in tissues and biological fluids over time, thereby improving the interpretation of toxicological results. Biomarkers should be interpreted with caution, and the available information encourages the detection of more than one marker to avoid misinterpretation.

Acknowledgments

This review is part of project No. 247925 funded by the National Council for Science and Technology (CONACyT) from Mexico.

References

- Stimpfl T, Muller K, Gergov M, LeBeau M, Polettini A, et al. (2011) Laboratory Guidelines. TIAFT-Bulletin XXXI: 23-26.

- Goldstein PJ (1985) The drugs/violence nexus: A tripartite conceptual framework. J Drugs Issues 39: 493-506.

- Musshoff F, Stamer UM, Madea B (2010) Pharmacogenetics and forensic toxicology. Forensic Sci Int 203: 53-62.

- Kreuz DS, Axelrod J (1973) Delta-9-tetrahydrocannabinol: Localization in body fat. Science 179: 391-393.

- Johansson E, Norén K, Sjövall J, Halldin MM (1989) Determination of delta 1-tetrahydrocannabinol in human fat biopsies from marihuana users by gas chromatography-mass spectrometry. Biomed Chromatogr 3: 35-38.

- Johansson E, Agurell S, Hollister LE, Halldin MM (1988) Prolonged apparent half-life of delta 1-tetrahydrocannabinol in plasma of chronic marijuana users. J Pharm Pharmacol 40: 374-375.

- Leuschner JT, Harvey DJ, Bullingham RES, Paton WDM (1986) Pharmacokinetics of delta-9-tetrahydrocannabinol in rabbits following single or multiple intravenous doses. Drug Metab Dispos 14: 230-238.

- Garrett ER, Hunt CA (1977) Pharmacokinetics of delta-9-tetrahydrocannabinol in dogs. J Pharm Sci 66: 395-407.

- Anizan S, Milman G, Desrosiers N, Barnes AJ, Gorelick DA, et al (2013) Oral fluid cannabinoid concentrations following controlled smoked cannabis in chronic frequent and occasional smokers. Anal Bioanal Chem 405: 8451-8461.

- Desrosiers NA, Himes SK, Scheidweiler KB, Concheiro-Guisan M, Gorelick DA, et al (2014) Phase I and II cannabinoid disposition in blood and plasma of occasional and frequent smokers following controlled smoked cannabis. Clin Chem 60: 631-643.

- Toennes SW, Ramaekers JG, Theunissen EL, Moeller MR, Kauert GF (2008) Comparison of cannabinoid pharmacokinetic properties in occasional and heavy users smoking a marijuana or placebo joint. J Anal Toxicol 32: 470-477.

- Huestis MA, Cone EJ (1998) Differentiating new marijuana use from residual drug excretion in occasional marijuana users. J Anal Toxicol 22: 445-454.

- Lafolie P, Beck O, Blennow G, Boréus L, Borg S, et al (1991) Importance of creatinine analyses of urine when screening for abused drugs. Clin Chem 37: 1927-1931.

- Manno JE, Ferslew KE, Manno BR (1984) Urine excretion patterns of cannabinoids and the clinical application of the EMIT-d.a.u. cannabinoid urine assay for substance abuse treatment. In: Agurell S, Dewey WE, Willette RE (eds.) The Cannabinoids: Chemical, Pharmacologic, and Therapeutic Aspects. Academic Press, Orlando, pp: 281-290.

- Fraser AD, Worth D (1999) Urinary excretion profiles of 11-nor-9-carboxy-delta9-tetrahydrocannabinol: A delta9-THCCOOH to creatinine ratio study. J Anal Toxicol 23: 531-534.

- Fraser AD, Worth D (2003) Urinary excretion profiles of 11-nor-9-carboxy-Delta9-tetrahydrocannabinol: A Delta9-THC-COOH to creatinine ratio study #2. Forensic Sci Int 133: 26-31.

- Fraser AD, Worth D (2004) Urinary excretion profiles of 11-nor-9-carboxy-Delta9-tetrahydrocannabinol and 11-hydroxy-Delta9-THC: Cannabinoid metabolites to creatinine ratio study IV. Forensic Sci Int 143: 147-152.

- Desrosiers NA, Lee D, Concheiro-Guisan M, Scheidweiler KB, Gorelick DA, et al (2014) Urinary cannabinoid disposition in occasional and frequent smokers: is THC-glucuronide in sequential urine samples a marker of recent use in frequent smokers? Clin Chem 60: 361-372.

- Segura M, Ortuño J, Farré M, McLure J, Pujadas M, et al. (2001) 3,4-Dihydroxymethamphetamine (HHMA): A major in vivo 3,4-methylenedioxymethamphetamine (MDMA) metabolite in humans. Chem Res Toxicol 14: 1203-1208.

- De La Torre R, Farré M, Ortuño J, Mas M, Brenneisen R, et al (2000) Non-linear pharmacokinetics of MDMA (‘ecstasy’) in humans. Br J Clin Pharmacol 49: 104-109.

- Helmlin H, Bracher K, Bourquin D, Vonlanthen D, Brenneisen R (1996) Analysis of 3,4-methylenedioxymethamphetamine (MDMA) and its metabolites in plasma and urine by HPLC-DAD and GC-MS. J Anal Toxicol 20: 432-440.

- Mas M, Farré M, de la Torre R, Roset PN, Ortuño J, et al (1999) Cardiovascular and neuroendocrine effects and pharmacokinetics of 3, 4-methylenedioxymethamphetamine in humans. J Pharmacol Exp Ther 290: 136-145.

- Abraham TT, Barnes AJ, Lowe RH, Kolbrich Spargo EA, Milman G, et al (2009) Urinary MDMA, MDA, HMMA and HMA excretion following controlled MDMA administration to humans. J Anal Toxicol 33: 439-46.

- Shin HS, Donike M (1996) Stereospecific derivatization of amphetamines, phenol alkylamines and hydroxyamines and quantification of the enantiomers by capillary GC/MS. Anal Chem 68: 3015-3020.

- Beckett A, Shenoy E, Salmon J (1972) The influence of replacement of the N-ethyl group by the cyanoethyl group on the absorption, distribution and metabolism of (plus or minus)-ethylamphetamine in man. J Pharm Pharmacol 24: 194-202.

- Spitzer M, Franke B, Walter H, Buechler J, Wunderlich AP, et al (2001) Enantio-selective cognitive and brain activation effects of N-ethyl-3,4-methylenedioxyamphetamine in humans. Neuropharmacology 41: 263-271.

- Pizarro N, Ortuño J, Farré M, Hernández-López C, Pujadas M, et al (2002) Determination of MDMA and its metabolites in blood and urine by gas chromatography-mass spectrometry and analysis of enantiomers by capillary electrophoresis. J Anal Toxicol 26: 157-165.

- Schwaninger AE, Meyer MR, Barnes AJ, Kolbrich-Spargo E, Gorelick D, et al (2012) Stereoselective urinary MDMA (ecstasy) and metabolites excretion kinetics following controlled MDMA administration to humans. Biochem Pharmacol 83: 131-138.

- Fallon JK, Kicman AT, Henry JA, Milligan PJ, Cowan DA, et al (1999) Stereospecific analysis and enantiomeric disposition of 3, 4-methylenedioxymethamphetamine (Ecstasy) in humans. Clin Chem 45: 1058-69.

- Pizarro N, Farre M, Pujadas M, Peiro AM, Roset PN, et al (2004) Stereochemical analysis of 3,4-methylenedioxymethamphetamine and its main metabolites in human samples including the catechol-type metabolite (3,4-dihydroxymethamphetamine). Drug Metab Dispos 32: 1001-1007.

- Afonso L, Mohammad T, Thatai D (2007) Crack whips the heart: A review of the cardiovascular toxicity of cocaine. Am J Cardiol 100: 1040-1043.

- Jatlow P (1988) Cocaine: Analysis, pharmacokinetics, and metabolic disposition. Yale J Biol Med 61: 105-113.

- Vandevenne M, Vandenbussche H, Verstraete A (2000) Detection time of drugs of abuse in urine. Acta Clin Belg 55: 323-333.

- Cone EJ, Sampson-Cone AH, Darwin WD, Huestis MA, Oyler JM (2003) Urine testing for cocaine abuse: metabolic and excretion patterns following different routes of administration and methods for detection of false-negative results. J Anal Toxicol 27: 386-401.

- Klette KL, Poch GK, Czarny R, Lau CO (2000) Simultaneous GC-MS analysis of meta- and para-hydroxybenzoylecgonine and norbenzoylecgonine: A secondary method to corroborate cocaine ingestion using nonhydrolytic metabolites. J Anal Toxicol 24: 482-488.

- Isenschmid DS, Fischman MW, Foltin RW, Caplan YH (1992) Concentration of cocaine and metabolites in plasma of humans following intravenous administration and smoking of cocaine. J Anal Toxicol 16: 311-314.

- Jufer RA, Wstadik A, Walsh SL, Levine BS, Cone EJ (2000) Elimination of cocaine and metabolites in plasma, saliva and urine following repeated oral administration to human volunteers. J Anal Toxicol 24: 467-477.

- Scheidweiler KB, Spargo EAK, Kelly TL, Cone EJ, Barnes AJ, et al (2010) Pharmacokinetics of cocaine and metabolites in human oral fluid and correlation with plasma concentrations following controlled administration. Ther Drug Monit 32: 628-637.

- Smith ML, Shimomura ET, Summers J, Paul BD, Jenkins AJ, et al (2001) Urinary excretion profiles for total morphine, free morphine and 6-acetylmorphine following smoked and intravenous heroin. J Anal Toxicol 25: 504-514.

- Beck O, Böttcher M (2006) Paradoxical results in urine drug testing for 6-acetylmorphine and total opiates: implications for best analytical strategy. J Anal Toxicol 30: 73-79.

- Cone EJ, Welch P, Mitchell JM, Paul BD (1991) Forensic drug testing for opiates: I. Detection of 6-acetylmorphine in urine as an indicator of recent heroin exposure; drug and assay considerations and detection times. J Anal Toxicol 15: 1-7.

- Oyler JM, Cone EJ, Joseph RE, Huestis MA (2000) Identification of hydrocodone in human urine following controlled codeine administration. J Anal Toxicol 24: 530-535.

- Cylwik B, Chrostek L, Szmitkowski M (2007) Non-oxidative metabolites of ethanol as markers of recent alcohol drinking. Pol Merkur Lekarski 23: 235-238.

- Helander A, Beck O (2005) Ethyl sulfate: A metabolite of ethanol in humans and a potential biomarker of acute alcohol intake. J Anal Toxicol 29: 270-274.

- De Giovanni N, Cittadini F, Martello S (2015) The usefulness of biomarkers of alcohol abuse in hair and serum carbohydrate-deficient transferrin: A case report. Drug Test Anal 7: 703-707.

- Lee D, Milman G, Barnes AJ, Goodwin RS, Hirvonen J, et al (2011) Oral fluid cannabinoids in chronic, daily cannabis smokers during sustained, monitored abstinence. Clin Chem 57: 1127-1136.

- Andås HT, Krabseth H-M, Enger A, Marcussen BN, Haneborg A-M, et al (2014) Detection time for THC in oral fluid after frequent cannabis smoking. Ther Drug Monit 36: 808-814.

- Odell MS, Frei MY, Gerostamoulos D, Chu M, Lubman DI (2015) Residual cannabis levels in blood, urine and oral fluid following heavy cannabis use. Forensic Sci Int 249: 173-180.

- Newmeyer MN, Desrosiers NA, Lee D, Mendu DR, Barnes AJ, et al (2014) Cannabinoid disposition in oral fluid after controlled cannabis smoking in frequent and occasional smokers. Drug Test Anal 6: 1002-1010.

- Reiter A, Hake J, Meissner C, Rohwer J, Friedrich HJ, et al (2001) Time of drug elimination in chronic drug abusers. Case study of 52 patients in a “low-step” detoxification ward. Forensic Sci Int 119: 248-253.

- Karschner EL, Schwope DM, Schwilke EW, Goodwin RS, Kelly DL, et al (2012) Predictive model accuracy in estimating last Δ9-tetrahydrocannabinol (THC) intake from plasma and whole blood cannabinoid concentrations in chronic, daily cannabis smokers administered sub chronic oral THC. Drug Alcohol Depend 125: 313-319.

- Moolchan ET, Cone EJ, Wstadik A, Huestis MA, Preston KL (2000) Cocaine and metabolite elimination patterns in chronic cocaine users during cessation: Plasma and saliva analysis. J Anal Toxicol 24: 458-466.

- Preston KL, Epstein DH, Cone EJ, Wtsadik AT, Huestis MA, et al (2002) Urinary elimination of cocaine metabolites in chronic cocaine users during cessation. J Anal Toxicol 26: 393-400.

- Huestis MA, Barnes A, Smith ML (2005) Estimating the time of last cannabis use from plasma delta9-tetrahydrocannabinol and 11-nor-9-carboxy-delta9-tetrahydrocannabinol concentrations. Clin Chem 51: 2289-2295.

- Verstraete AG (2004) Detection times of drugs of abuse in blood, urine and oral fluid. Ther Drug Monit 26: 200-205.

- Anderson RA, Wylie FM (2005) Back-tracking calculations for alcohol and drugs. In: Jason PJ, Byard RW (eds.) Encycl Forensic Leg Med Elsevier Ltd, Glasgow, pp: 261-270.

- Niedbala RS, Kardos KW, Fritch DF, Kardos S, Fries T, et al (2001) Detection of marijuana use by oral fluid and urine analysis following single-dose administration of smoked and oral marijuana. J Anal Toxicol 25: 289-303.

- Musshoff F, Madea B (2006) Review of biologic matrices (urine, blood, hair) as indicators of recent or ongoing cannabis use. Ther Drug Monit 28: 155-163.

- ElSohly MA (2007) Marijuana and the cannabinoids. Springer International Publishing AG.

- Cone EJ, Huestis MA (1993) Relating blood concentrations of tetrahydrocannabinol and metabolites to pharmacologic effects and time of marijuana usage. Ther Drug Monit 15: 527-532.

- Ramaekers JG, Berghaus G, Van Laar M, Drummer OH (2004) Dose related risk of motor vehicle crashes after cannabis use. Drug Alcohol Depend 73: 109-119.

- Hartman RL, Huestis MA (2013) Cannabis effects on driving skills. Clin Chem 59: 478-492.

- Kurzthaler I, Hummer M, Miller C, Sperner-Unterweger B, Günther V, et al. (1999) Effect of cannabis use on cognitive functions and driving ability. J Clin Psychiatry 60: 395-399.

- Neavyn MJ, Blohm E, Babu KM, Bird SB (2014) Medical marijuana and driving: A review. J Med Toxicol 10: 269-279.

- Huestis MA, Henningfield JE, Cone EJ (1992) Blood Cannabinoids. II. Models for the prediction of time of marijuana exposure from plasma concentrations of delta9-tetrahydrocannabinol (THC) and 11-nor-9-carboxy-delta9-tetrahydrocannabinol (THCCOOH). J Anal Toxicol 16: 283-290.

- Karschner EL, Schwope DM, Schwilke EW, Goodwin RS, Kelly DL, et al (2012) Predictive model accuracy in estimating last Δ9-tetrahydrocannabinol (THC) intake from plasma and whole blood cannabinoid concentrations in chronic, daily cannabis smokers administered sub chronic oral THC. Drug Alcohol Depend 125: 313-319.

- Cone EJ, Oyler J, Darwin WD (1997) Cocaine disposition in saliva following intravenous, intranasal and smoked administration. J Anal Toxicol 21: 465-475.

- Kolbrich EA, Barnes AJ, Gorelick DA, Boyd SJ, Cone EJ, et al (2006) Major and minor metabolites of cocaine in human plasma following controlled subcutaneous cocaine administration. J Anal Toxicol 30: 501-510.

- Dubois N, Hallet C, Seidel L, Demaret I, Luppens D, et al (2015) Estimation of the time interval between the administration of heroin and the sampling of blood in chronic inhalers. J Anal Toxicol, pp: 1-6.

- Gustavsen I, Mørland J, Bramness JG (2006) Impairment related to blood amphetamine and/or methamphetamine concentrations in suspected drugged drivers. Accid Anal Prev 38: 490-495.

- Huestis MA (2002) Cannabis (Marijuana): Effects on human behavior and performance. Forensic Sci Rev, pp: 15-60.

- Huestis MA, Smith ML (2006) Modern analytical technologies for the detection of drug abuse and doping. Drug Discov Today Technol 3: 49-57.

- Frederick DL (2012) Toxicology testing in alternative specimen matrices. Clin Lab Med 32: 467-492.

- Huestis MA, Oyler JM, Cone EJ, Wstadik AT, Schoendorfer D, et al (1999) Sweat testing for cocaine, codeine and metabolites by gas chromatography-mass spectrometry. J Chromatogr B Biomed Sci Appl 733: 247-264.

- Huestis MA, Gustafson RA, Moolchan ET, Barnes A, Bourland JA, et al (2007) Cannabinoid concentrations in hair from documented cannabis users. Forensic Sci Int 169: 129-136.

- Polettini A, Cone EJ, Gorelick DA, Huestis MA (2012) Incorporation of methamphetamine and amphetamine in human hair following controlled oral methamphetamine administration. Anal Chim Acta 726: 35-43.

- Dams R, Huestis MA, Lambert WE, Murphy CM (2003) Matrix effect in bio-analysis of illicit drugs with LC-MS/MS: Influence of ionization type, sample preparation and biofluid. J Am Soc Mass Spectrom 14: 1290-1294.

- Huestis MA, Barnes A, Smith ML (2005) Estimating the time of last cannabis use from plasma delta9-tetrahydrocannabinol and 11-nor-9-carboxy-delta9-tetrahydrocannabinol concentrations. Clin Chem 51: 2289-2295.

- Lowe RH, Abraham TT, Darwin WD, Herning R, Cadet JL, et al (2009) Extended urinary Delta9-tetrahydrocannabinol excretion in chronic cannabis users precludes use as a biomarker of new drug exposure. Drug Alcohol Depend 105: 24-32.

- Gjerde H, Verstraete AG (2011) Estimating equivalent cut-off thresholds for drugs in blood and oral fluid using prevalence regression: A study of tetrahydrocannabinol and amphetamine. Forensic Sci Int 212: e26-30.
